Windows 11 is About to Get Its AI Moment (Premium)

- Paul Thurrott

- Feb 08, 2024

As Windows 11 lurches into 2024 with rumors of a Moment 5 update, there are far bigger and more important updates to come. And it all starts with Windows 11 version 24H2, which I think will deliver Windows’ long-awaited AI moment.

First things first.

Nothing is certain these days, but Moment 5 should arrive in preview form as soon as February 27, on the normal Week D schedule, about two and a half weeks from now. By all accounts, it will be lackluster, a small collection of new features that includes minor improvements to Notepad, Copilot in Windows, Nearby Share, Windows 365, and the like. The only interesting changes will be limited to the EEA since this is where Microsoft will allegedly start implementing the changes required by the EU Digital Markets Act (DMA). Changes that, ahem, should be made available to all of Microsoft’s customers.

Moments and the monthly features that Microsoft seems to burp out to users randomly and without warning aren’t all that interesting, aside from the “it would be funny if it weren’t so troubling” fact that many of these updates are never tested before being foisted on the public. I am much more interested in 24H2, which we once thought of as Windows 12. And not just because it will represent another round of major updates for my Windows 11 Field Guide: I live in Windows 11 all day every day, and I’m curious to see how or if the AI advances that Microsoft is making in other parts of the company will ever impact this platform in a major way.

There’s been precious little evidence of that so far. Yes, Microsoft rushed Copilot into Windows 11 (and then, in a bit of PR tap-dancing, Windows 10) last year, force-installing it on as many users as possible. And it’s now routinely updating this unfinished and unrefined sidebar to bring it up to what the old Microsoft would have called ship quality. (With basic UI nonsense like this little update.) And yes, there are several AI-based features spread across Windows 11, like too little butter scraped across too much toast. (Tip of the hat to Tolkien for that turn of phrase.) Things like background removal and Cocreator in Paint, background blur and removal in Photos, text recognition in Snipping Tool, and the like.

These new features are all very nice, and useful. But they also fall into the monthly random new features bucket, and like Copilot, they were all rushed to market. Worse, none of them take advantage of the AI hardware acceleration afforded by the Neural Processing Units (NPUs) that are now available in a growing collection of new PCs. This is a classic chicken/egg problem, in which new AI features need to be good enough to justify PC upgrades, and enough modern PCs need to be in the market to justify making those features more sophisticated. Or something.

We’ll get there. But for all the changes we’ve seen in Windows 11, all the constant churn, it’s amazing how little has changed in the day-to-day. The capabilities Microsoft gives away for free in Copilot are incredible, and the perks I get in Copilot Pro are well worth the $20 I pay each month. But none of that is specific to Windows. When does Windows get to shine?

It’s been a long time coming. Just over a year ago, I wrote about the future of Windows and how I expected Microsoft to infuse all of its products with AI. At the time, the software giant had just launched what we now call Copilot (hilariously as a feature of its woeful Bing search engine, though that was later corrected) and CEO Satya Nadella had promised to add ChatGPT-like AI capabilities to “every Microsoft product.” But we had little to go on.

So I discussed the following possibilities.

The next major release of Windows would be called Windows 12. This one is still up in the air, of course, but after a year of waiting for news about this release—any news—it appears that this release will likely be called Windows 11 version 24H2 for all the obvious reasons instead. So I’m using the 24H2 name here.

24H2 might require an NPU. This came to me during an off-the-cuff conversation on an episode of Windows Weekly, but the idea has some grounding: Microsoft artificially limited the number of PCs that could upgrade from Windows 10 to 11, after all. And it’s possible that this unprecedented change was made in part to prepare the user base for a similar requirement for the next release, one based on an NPU chip being present in the PC. Obviously, there are two more likely scenarios: Some 24H2 features could require an NPU, as Windows Studio Effects does today, or some features could simply work better/faster when an NPU is present. Either way, the concept is the same. These requirements are designed to trigger a PC upgrade cycle that would benefit Microsoft and its PC maker partners.

What if AI is the next .NET? Microsoft’s desire to “AI all the things,” as I put it, reminded me of a similar initiative it tried in the early 2000s when it was going to “.NET all the things.” That effort failed hard, in part because of shifting executive ranks and priorities, and the diminished power and attention of Bill Gates. But with AI, of course, we have a more singular focus and a natural extension of Microsoft’s cloud computing initiatives, which led to it being one of the most powerful companies on earth. Microsoft isn’t going to drop the ball this time.

Windows is the logical center for AI on the client because cloud-based AI is expensive. No one was really talking up hybrid AI a year ago—a combination of AI in the cloud and AI on the client—but I theorized that the expense of cloud-based AI would make Windows more important to Microsoft again because it is the logical place for the most powerful hybrid AI experience, whether we’re talking about features in Windows or features in Microsoft 365 or third-party apps. “AI could make Windows relevant again,” I wrote. “Not just from inertia or for performing legacy tasks. But for getting anything done. It could become the key to how we are productive at work in the future.”

I still love that last bit. More importantly, I still believe it’s true. And in the wake of a year’s worth of amazing AI advances from Microsoft and others, and a few recent events tied to other Microsoft products, I’d like to look out at the year ahead and see what, if anything, has happened in the past year to give us hope for the future of AI in Windows.

There is reason for hope. In a rare burst of prognostication, I wrote in that year-ago article that we “might see a shift to an AI-centric world in which we ask Windows to perform some task, and it uses some combination of Edge, Bing, Word, Excel, and whatever other Microsoft tools to pull together a hybrid document of whatever kind, and the user wouldn’t need to know much if anything about any of those apps or services to make it happen.”

What I’m describing there is orchestration, and if you read Ask Paul each Friday, you may know that I have repeatedly referenced Stevie Bathiche’s keynote appearance at Build 2023 last May during which he explained with stunning clarity how Microsoft would implement AI across its stack using three AI “application structures.” These structures are how we get from here to there.

The first of these structures is the copilot, a new user experience that sits beside current (legacy) applications and provides help. Copilot in Windows and the Copilot and Image Creator from Designer apps in the Microsoft Edge sidebar are perhaps the most well-known of these solutions.

The second structure is AI inside, new solutions that are architected specifically around AI capabilities. Here and elsewhere, Stevie has cited Clipchamp and Designer as examples of this new application structure, where AI infuses the application from the inside out, “changing the interaction model completely.” From a user experience perspective, these types of applications can deliver an astonishing array of functionality using surprisingly little user interface, instead of being top-heavy with individual commands like the traditional Office suite. Inside apps help mainstream users become like experts, he says, because AI is in the app.

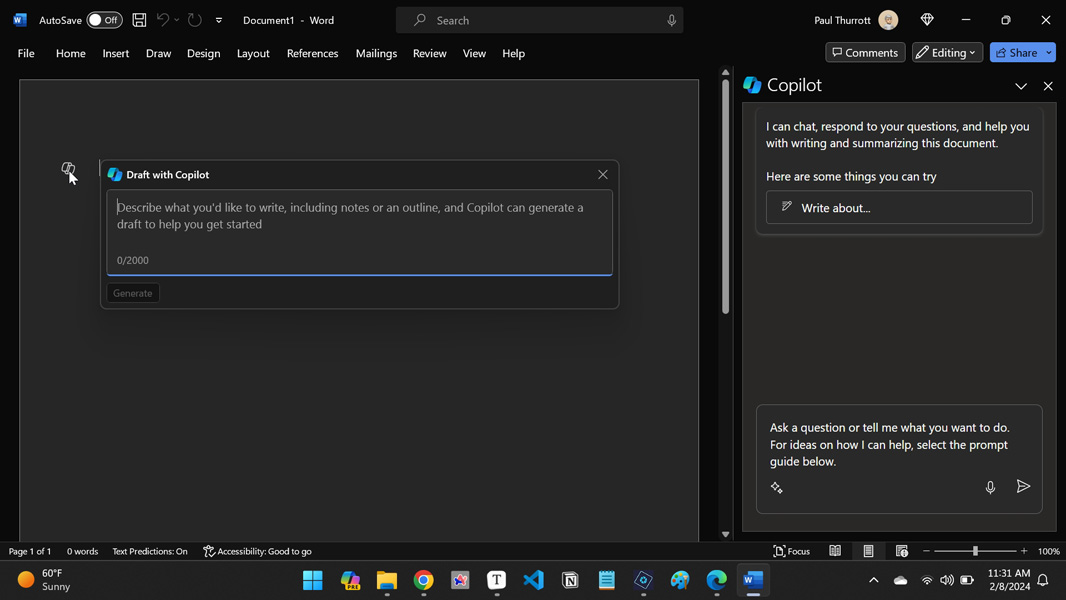

From personal experience, I can confirm that Clipchamp delivers on the promise noted above. But those who subscribe to Copilot Pro, as I do, or Copilot for Microsoft 365 can experience a unique set of AI capabilities that bridge or include those first two application structures. When you use Microsoft Word, for example, you can enable a Copilot sidebar that quite explicitly delivers the first copilot application structure. But Copilot capabilities also expose themselves inline while you’re writing, and I believe this capability represents an early example of an AI inside application structure.

This is an important point: These three application structures—I’ll get to the third in a moment—can co-exist, and they don’t represent three specific stages of evolution from a timeline perspective. That is, we’re not going to move from a world of only copilots to a world of only AI inside apps. We will instead mix and match between the three.

Which brings us, of course, to the third application structure, which Stevie calls AI outside, where AI is orchestrating multiple applications and services on your behalf to complete discrete jobs.

Here’s how Stevie explained it at Build.

“Here, the AI will orchestrate across the multiple apps, plug-ins, and services, functioning more as an agent,” he said. “If you take a step back, the Windows shell itself is an orchestrator. In fact, maybe one of the most powerful orchestrators across apps, across content, across the [Microsoft] Graph. Imagine with AI and natural language, you start to see glimpses of the opportunity with [Copilot in Windows]. And it is here when you get intelligence that is functioning not just at granular details, but at the higher levels where you get a mixing of both tactics and strategy, you get both vision and execution. It’s like a Copilot of Copilots, a very powerful application structure.”

A Copilot of Copilots. Interesting. And an apt term we might use to describe Windows itself, as it evolves in 24H2.

And Stevie has said as much. In an interview last year, he noted that “the best orchestrator of all is Windows itself. [It’s] a thing that is sitting there that allows a vessel for work to be done across many, many applications, both the amazing first party apps that we’re developing along with third-party apps. That’s the future.”

You can’t get more explicit than that. Windows, the best orchestrator of all, will evolve to use AI to orchestrate multiple apps, Copilot plug-ins, and services to act as an agent on the user’s behalf to complete complicated tasks with multiple steps. That, I think, is the vision for 24H2.

Stevie continues.

“What we understand at Microsoft is the next computing form factor isn’t a specific thing, it’s a system. Software will stitch together your experiences across anything that you want, anything that you might own across all your devices, whether it’s on your lap, in your pocket, or on your wrist. And that’s the big opportunity for software in front of us, connecting those experiences, connecting that data, connecting what you need to do. And you have an agent essentially alongside you to help you complete your work. That’s the vision.”

That’s the vision. Can Microsoft implement even a basic version of this in 24H2? I think it can because the hard work is happening elsewhere. It’s happening in the apps, Copilot plug-ins, and services that I keep mentioning. But let’s get more specific. We need more AI inside apps that expose AI functionality, not just to the user interacting with them, but in some standard way that can be automated by an AI agent. We need more of the Copilot plug-ins that are already starting to appear on the Microsoft Copilot website and, presumably, for ChatGPT, since those things are cross-compatible. And online and local services that can likewise plug into this new system. And then we need capabilities in Windows that can tie it all together. And expose this functionality via some user experience that is logical and familiar.

Copilot in Windows is the most obvious place for this functionality, especially in its initial implementation. And God help us, but Microsoft CEO Satya Nadella actually suggested as much when he infamously referred to Copilot as a new Start button during a Qualcomm event last year.

“The marquee experience for us is going to be Copilot,” he said. “When … Windows first came together, we had the Start button. The Copilot is like the Start button. It becomes the orchestrator of all your application experiences. So, for example, I just go there and express my intent and it either navigates me to an application or it brings the application to the Copilot. So it helps me learn, query, create, and completely changes, I think, the user habits.”

Remember that he said this after Microsoft had shipped Copilot in Windows, and that this sidebar then, as now, offers just limited integration functionality. You can use it to access only a short list of features and configure a few settings, things like enabling dark mode or taking a screenshot. Clearly, there are bigger plans for the future, much bigger. But it occurs to me that we should view the current list of integration capabilities as the first step to this future. For example, when you issue Copilot in Windows a prompt like mute volume today, it responds as a digital personal assistant would by noting that it understood the command and making sure that’s what you want. When you click “Yes,” it does your bidding.

Copilot today can already engage in conversations when creating textual or image content, ongoing interactions in which you supply follow-up commands or questions to hone the output until you get what you want. This is similar to how digital assistants evolved, too. But it’s not difficult to imagine a future in which Copilot in Windows evolves further to include functionality that a digital assistant might call a routine, in which it (wait for it) orchestrates the completion of a task by implementing several steps in turn. And as the number of apps, plug-ins, and services that work with Copilot expands and gets more sophisticated, Copilot in turn will get more sophisticated as well. And we will arrive at that third application structure on Windows. In other words, Windows will orchestrate our work for us.

It will be interesting to see what form(s) this takes. Today, we treat apps as a sort of “whack-a-mole” experience in which we launch specific apps to accomplish certain tasks. And projects, workloads that comprise multiple tasks, typically involve multiple apps and services. What will it look like as we move past the individual app and service level? Previous attempts at this, like the document-centric UI in Windows 95 and the hubs in Windows Phone, failed, after all. Is AI the secret sauce they didn’t have to be successful? I think so, but it’s not clear.

There’s more. What about hybrid AI features that involve the NPU? And what about paid products like Copilot Pro and Copilot for Microsoft 365? Will paying Microsoft improve the experience? And if so, how? There are so many moving pieces.

And again, we have to ask ourselves how much is even possible in 24H2. And while it’s easy to be cynical, too easy if you’ve suffered at the inept hands of the Windows team recently as I have, it’s important to put this in perspective. The past year has seen an explosion in Microsoft AI capabilities across the stack, and that explosion will continue unabated this year. And Microsoft’s senior leadership, including the CEO who, yes, personally orchestrated this company-wide pivot, have specifically called out Windows as being core to this work. This is happening. And I think we’ll see a big chunk of it in 24H2.

This is a future I can embrace. It can’t happen quickly enough.
