Google Delivers a Metric Ton of AI to Developers at I/O 2024

- Paul Thurrott

- May 14, 2024


During its Google I/O 2024 keynote this morning, Google announced a wide range of initiatives for developers who wish to leverage AI in their apps and services. And of broader interest, the online giant is bringing the on-device Gemini Nano model to the desktop versions of its Chrome web browser, following earlier rollouts on the Pixel 8 Pro and Samsung Galaxy S24 series smartphones.

“AI is fundamentally changing what we build and how we go about building it,” Google vice president Jeanine Banks said. “At Google, our mission is to make AI accessible and helpful for every developer by providing you with the tools to innovate in this new reality.”

There’s a lot to discuss here, but Google’s plan to deliver on-device, hardware-accelerated AI capabilities will immediately and directly impact the most people. This work uses WebGPU and WebAssembly (WASM), and it was done in partnership with Google’s Chromium partners as well as third-party browser makers like Apple and Mozilla to ensure that users have a consistent hybrid AI experience no matter which browser they choose.

For its own browser, Chrome, Google is using a fine-tuned version of its Gemini Nano AI model so that developers can target high-level APIs for translation, captioning, transcription, and other tasks that can happen on-device. The firm is also partnering with app developers on web apps that can take advantage of Gemini in Chrome. For example, Adobe will enhance the web version of Photoshop with hardware-accelerated features using Gemini in Chrome.

Google is also improving the developer experience in Chrome with Gemini by adding AI insights (such as explaining errors, suggesting causes, and providing fixes) directly to the Chrome Developer Tools console. There are also new APIs for speculation rules, which govern page pre-fetching and pre-rendering, and for multipage apps that together “deliver near-instant, seamless page transitions that redefine the possibilities of web app interactions for all developers,” Google says.
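For readers who haven’t used speculation rules, here is a minimal sketch of how a page might declare them. It assumes the list-based JSON format that Chrome accepts inside a `<script type="speculationrules">` element. The helper names (`buildSpeculationRules`, `toScriptTag`) and the example paths are hypothetical and are not part of Google’s announcement.

```typescript
// Shape of a speculation rules document (list-based rules only, for brevity).
interface ListRule {
  source: "list";
  urls: string[];
}

interface SpeculationRules {
  prefetch?: ListRule[];
  prerender?: ListRule[];
}

// Hypothetical helper: builds the rules object from two lists of URLs.
// Empty lists are omitted so the resulting JSON stays minimal.
function buildSpeculationRules(
  prefetchUrls: string[],
  prerenderUrls: string[]
): SpeculationRules {
  const rules: SpeculationRules = {};
  if (prefetchUrls.length > 0) {
    rules.prefetch = [{ source: "list", urls: prefetchUrls }];
  }
  if (prerenderUrls.length > 0) {
    rules.prerender = [{ source: "list", urls: prerenderUrls }];
  }
  return rules;
}

// Hypothetical helper: wraps the rules in the script element markup.
// Assumes the URLs come from trusted, site-controlled data.
function toScriptTag(rules: SpeculationRules): string {
  return `<script type="speculationrules">${JSON.stringify(rules)}</script>`;
}

// Example: prefetch a likely next page and prerender the most likely one.
const exampleTag = toScriptTag(
  buildSpeculationRules(["/pricing"], ["/checkout"])
);
```

A server-rendered page could emit `exampleTag` in its markup. Browsers that don’t support speculation rules ignore the unknown script type, so the declaration is safe to ship as a progressive enhancement.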

Other key developer announcements today include:

Gemini 1.5 Flash and Gemini 1.5 Pro. Gemini 1.5 Flash is new to the Gemini family of models, and as its name suggests, it’s focused on speed. It’s available today in preview, provides the same multimodal input capabilities as other Gemini models, and is optimized for low-latency, narrow tasks. For those who need more general or complex multistep reasoning with the highest quality, Google is also releasing Gemini 1.5 Pro in preview, its biggest update since the original Gemini launch in December 2023. Gemini 1.5 Flash and 1.5 Pro will soon support a 2 million token context window, and developers can join a waitlist to access that capability.

New Gemma models. Google is adding new members to the Gemma family of open models: In addition to CodeGemma for code completion and generation and RecurrentGemma for efficient memory usage, developers can now access PaliGemma for multimodal vision-language tasks. And Gemma 2 will deliver even better performance, with its 27-billion-parameter version outperforming models twice its size.

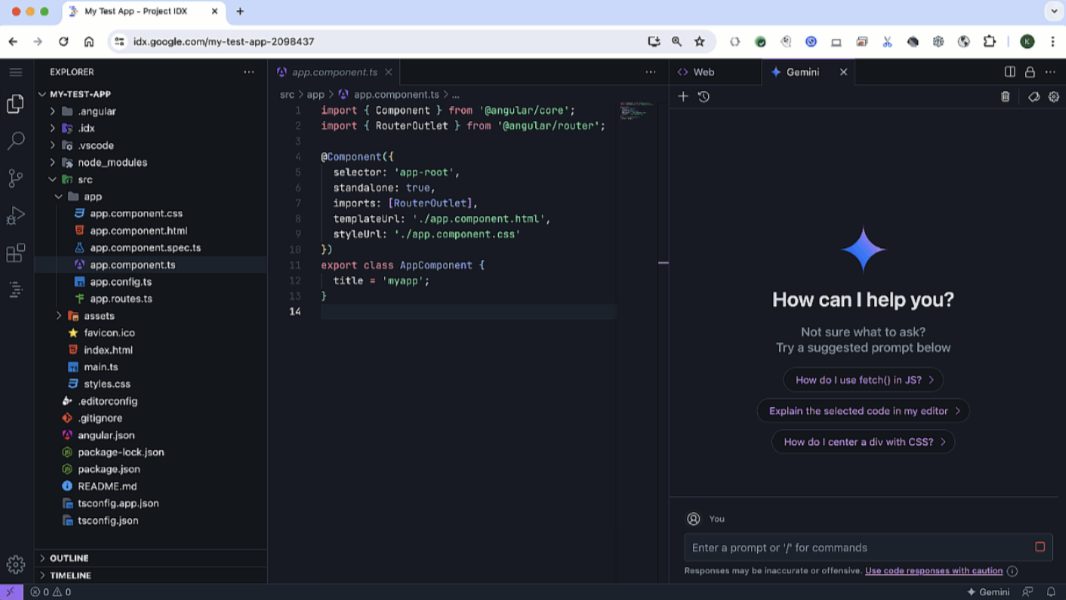

Project IDX in open beta. Google’s Project IDX web-based AI development environment has been locked behind a waitlist since its initial release last August, but it’s now in open beta and available to everyone. Developers can import existing projects and create new template-based projects, and the IDE now supports Chrome DevTools, Lighthouse, and Cloud Run integrations.

Flutter and Dart support for WASM. Google has released Flutter 3.22 and Dart 3.4 with preview support in stable for compiling web apps to WASM, delivering performance improvements of 2x to 3x when compared to web apps compiled to standard JavaScript. Dart 3.4 also includes a preview version of the Dart macros system that supports serializing and deserializing JSON data.

Checks. Google’s AI-powered compliance platform is now generally available, giving Android and iOS developers automated privacy compliance reports with actionable insights into their apps’ data collection and sharing practices. A Checks Code Compliance personal coding assistant is available now in private preview (and coming soon to all developers) to analyze source code in real time and identify potential compliance issues. A Checks AI Safety service, also in private preview, helps developers build safer generative AI applications with automated adversarial testing.

More AI experiences for Android apps. In addition to Checks, Google recently released Studio Bot in stable, added it to the Gemini family of products, and added Gemini support to Android Studio. Later this year, Gemini in Android Studio will support multimodal inputs using Gemini 1.5 Pro, and Gemini Nano will support additional Android devices beyond the Pixel 8 and Galaxy S24 series. And Jetpack Compose is being updated with AI-powered stylus handwriting recognition, Jetpack Glance, a Resizable Emulator, Compose UI check mode, and more.

Firebase Genkit. This new open source framework is built for JavaScript/TypeScript (with Go support coming soon), and it helps developers create Node.js backends so they can more easily integrate AI-powered features into new or existing apps using LLMs and related services. In its initial release, it supports Google Gemini and open source models via Ollama, plus vector databases like Chroma, Pinecone, Cloud Firestore, and PostgreSQL (pgvector).