What Did Microsoft Really Concede with Recall? (Premium)

- Paul Thurrott

- Jun 08, 2024


The knee-jerk overreaction to Microsoft Recall exposed the worst in all of us, but at least Microsoft is doing the right thing with Recall. After thoroughly reviewing the information it provided at the Copilot+ PC launch and comparing it to changes it promised this past week, I feel better than ever about this feature.

I’m sure you all know the story: Microsoft announced Recall as the key new “breakthrough AI experience” that will be exclusive to Snapdragon X-based Copilot+ PCs at the firm’s May 20 Copilot+ PC launch event. I had two immediate reactions as I watched this part of the presentation unfold. One, here was Microsoft, 20 years later, still trying to solve the problem of finding documents and other information instantaneously. And two, privacy advocates were going to have a field day with this.

Microsoft Recall solves a very real problem: It’s difficult to find documents and other information that we previously accessed on our PCs. Part of the issue is tied to so-called “data silos,” where information is spread out between files, email messages, chat threads, web browser tabs, and other locations. And part of it is that PCs, to date, have forced us to adapt to how they work, rather than the reverse.

But we’re not here to discuss functionality. We’re here to discuss privacy and security. And trust.

Recall took up less than 6 minutes of the 1 hour and 8 minute Copilot+ PC launch event, and so Microsoft’s explanation of why customers should trust this feature was decidedly light. Yusuf Mehdi made only four explicit claims related to privacy and security:

“We’ve built Recall with responsible AI principles and aligned it with our standards.”

You can read about Microsoft’s principles and approach to responsible AI on its website. At a high level, Microsoft says it develops AI systems responsibly and in ways that warrant people’s trust. More to the point, responsible AI must take into account how the system might work when used in ways its makers didn’t intend. And it must take privacy and security into account. This is all delightfully vague.

“We’re going to keep your Recall index private and local and secure on just the device.”

Recall requires a powerful NPU because it uses multiple on-device models at the same time, all running in the background, to do its work without harming system performance or battery life. But the on-device nature of Recall also requires data storage. And Microsoft never explained how that data will be “private, local, and secure” and stay “just on the device.”

“We won’t use any of that information to train any AI models.”

This is a key claim for any private AI solution these days, and it should be reassuring to users: Not only is the AI that’s processing your data and information only available on-device, what happens on your PC stays on your PC. Nothing is fed into Microsoft’s broader AI efforts, anonymized or not.

“We put you completely in control with the ability to edit and delete anything that has been captured.”

Here, again, vagueness won the day: Microsoft often claims that its customers are in control, but certain behaviors in Windows 11 undermine those claims via harassment in the form of “suggestions” aimed at getting you to allow Microsoft to track your activities more aggressively, and by enabling features like OneDrive Folder Backup silently behind your back after you’ve explicitly denied that request multiple times.

All these claims were understandably met with suspicion and even derision. But Microsoft also published documentation for Recall online that supplied the details we needed.

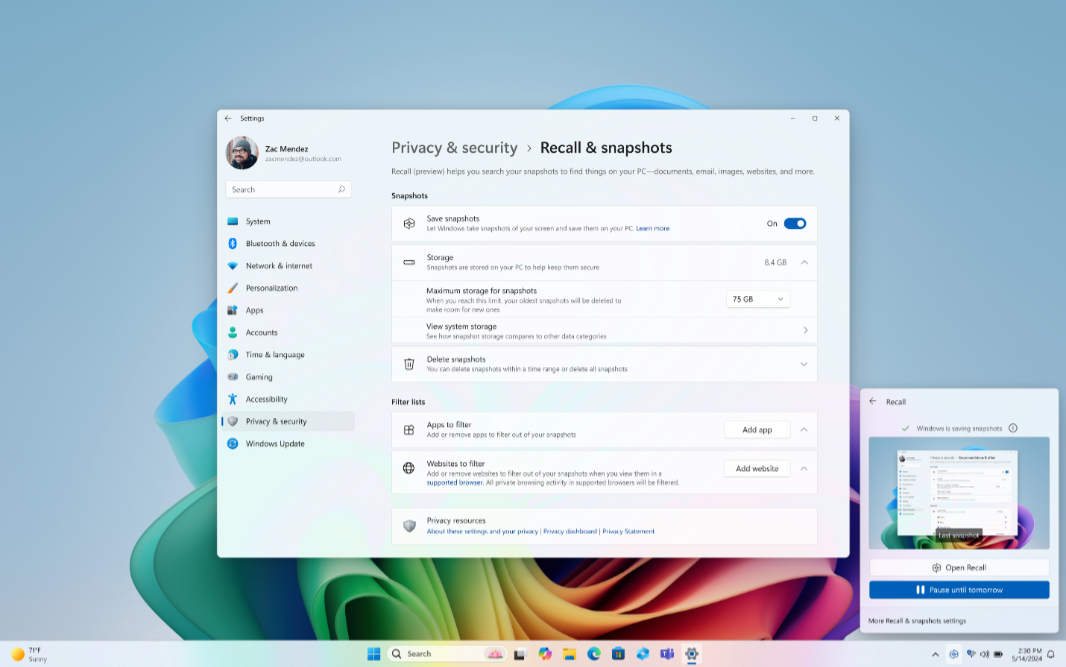

Retrace your steps with Recall. This Microsoft Support article explains how Recall creates snapshots (frequently misunderstood to be just screenshots) and analyzes them using something called screenray locally on the PC. It describes the Recall user interface and how search results can be filtered by apps, text matches, and visual matches. It explains how you can pause or resume snapshotting, exclude specific apps and websites, and manage your snapshots and disk space usage. That you can delete specific snapshots, all snapshots, or a range of snapshots. This goes a long way toward addressing the “you’re in control” bit.

Manage Recall on Microsoft Learn describes the corporate policies that commercial customers can use to manage Recall, though there’s only a single DisableAIDataAnalysis policy at the moment.

Privacy and control over your Recall experience. This Microsoft Support document continues the “you’re always in control” discussion by explaining that you will be prompted about Recall during the initial setup of your Copilot+ PC. (More on that below.) It describes how a Recall app shortcut will be pinned to your Taskbar, and that a Recall snapshot icon in the system tray will inform you when Windows is saving snapshots. It explains that you can disable snapshots in the Settings app or pause snapshots with the system tray icon. It also discusses how snapshots are encrypted using whatever full-disk encryption method your Windows install supports (Device Encryption in Windows 11 Home, BitLocker in Pro or higher). That Recall snapshots are not “shared” with other users signed in to the same PC. And that Microsoft cannot access or view the snapshots. It also explains that Copilot+ PCs are Secured-core PCs with a Microsoft Pluton security processor and Windows Hello Enhanced Sign-in Security (ESS), a “more secure sign-in that uses biometric data or a device-specific PIN.” ESS “provides an additional level of security to biometric data by leveraging specialized hardware and software components, such as Virtualization Based Security (VBS) and Trusted Platform Module 2.0 to isolate and protect a user’s authentication data and secure the channel by which that data is communicated.”

New Windows 11 features strengthen security to address evolving cyberthreat landscape. This excellent David Weston blog post provides even more detail about the additional security protections provided by Copilot+ PCs. It notes that these Secured-core PCs provide advanced firmware safeguards and dynamic root-of-trust measurement to help protect from chip to cloud. That Pluton helps protect credentials, identities, personal data, and encryption keys, making them significantly harder to remove, even if a cyberattacker installs malware or has physical possession of the PC. And that Windows Hello ESS provides more secure biometric sign-ins and eliminates the need for a password.

Copilot+ PC features FAQ. This documentation notes that “Recall is currently in preview status; during this phase, [Microsoft] will collect customer feedback, develop more controls for enterprise customers to manage and govern Recall data, and improve the overall experience for users.” It warns that snapshots can’t hide sensitive information like passwords or account numbers. And it clearly states that snapshots are only available to the person whose profile was used to sign in: “If two people share a device with different profiles, they will not be able to access each other’s snapshots.”

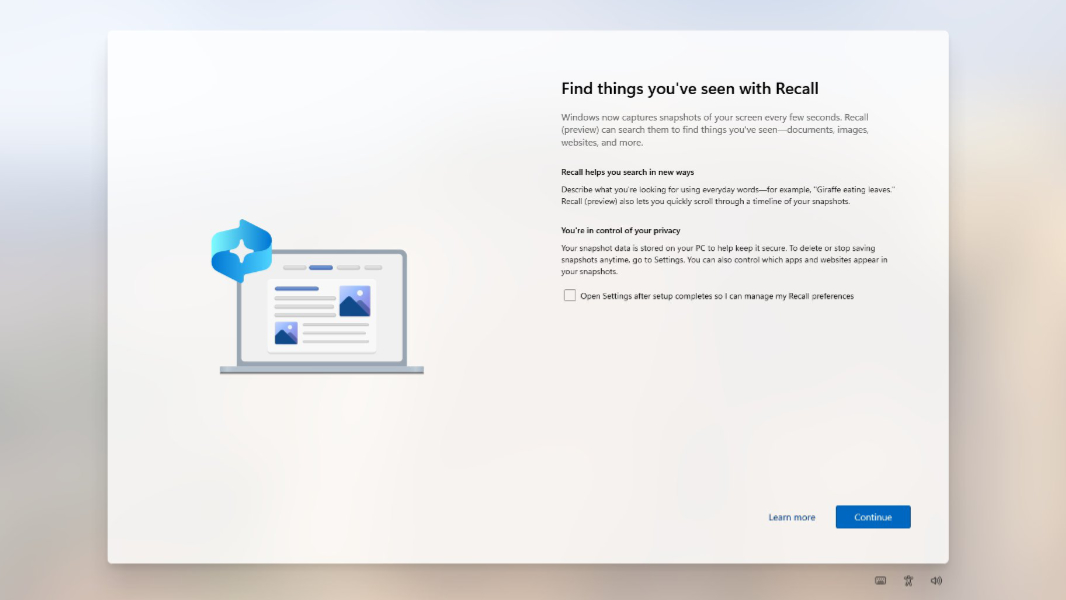

These descriptions, all available the same day as the launch, collectively led to my first debunking of the Recall criticisms in the concisely titled Windows 11 Recall is Not a Privacy Concern. But I reported that “Recall is an opt-in experience” because that’s what Microsoft told me. Others used the quote “optional experience.” And when we finally got our first peek at the initial Setup screen that Microsoft describes here (noted above and found at the top of this article), the differences between “opt-in” and “optional” became clearer.

This is how Microsoft described (and still describes) it:

“During setup of your new Copilot+ PC, and for each new user, you’re informed about Recall and given the option to manage your Recall and snapshots preferences. If selected, Recall settings will open where you can stop saving snapshots, add filters, or further customize your experience before continuing to use Windows 11. If you continue with the default selections, saving snapshots will be turned on.”

In other words, Recall was not initially an opt-in experience. It was enabled by default, but could be disabled later, which fulfills the “optional experience” tag line. During initial setup, you could check an option called “Open Settings after setup completes so I can manage my Recall preferences.” You could also just find those preferences in Settings later by navigating to Privacy & security > Recall & snapshots. There, you would find the large “On/Off” switch I expected Microsoft to provide during initial setup.

With the criticisms mounting, I wrote my second Recall editorial, Microsoft, Please Address the Recall Concerns Immediately (Premium), and explained that the security researchers and others who had hacked Recall to work on non-Copilot+ PCs had proven nothing, since normal Windows 11 PCs don’t have the above-described security controls that are either unique to or at least enabled by default on Copilot+ PCs. Put simply, these people were lying.

I want to be clear about this: Everyone who wrote a post claiming that they had “proven” that Recall was insecure was lying. It’s that simple.

This doesn’t mean that Recall was (or is) perfect. It doesn’t mean that vulnerabilities wouldn’t be found later when we could test this feature on real Copilot+ PCs. It means only that these sensationalist stories are just that, stories. And that any later issues we discover wouldn’t retroactively prove that these people had made a point.

“If I have one complaint about Recall … it’s that the option to ‘opt-in’ during initial Setup is the same kind of dark pattern language Microsoft uses elsewhere,” I wrote. “To be fair to Microsoft, that’s partially less problematic because the PC is fully secured before you even see this screen. But this is still not OK: There should be an On/Off switch, and Recall should literally be disabled by default.”

With that in mind, let’s take a fresh look at what Microsoft conceded yesterday when it provided an update on Recall that addressed feedback it received.

Getting past the reiteration of previous points (most noted above), Microsoft corporate vice president Pavan Davuluri said that Microsoft would:

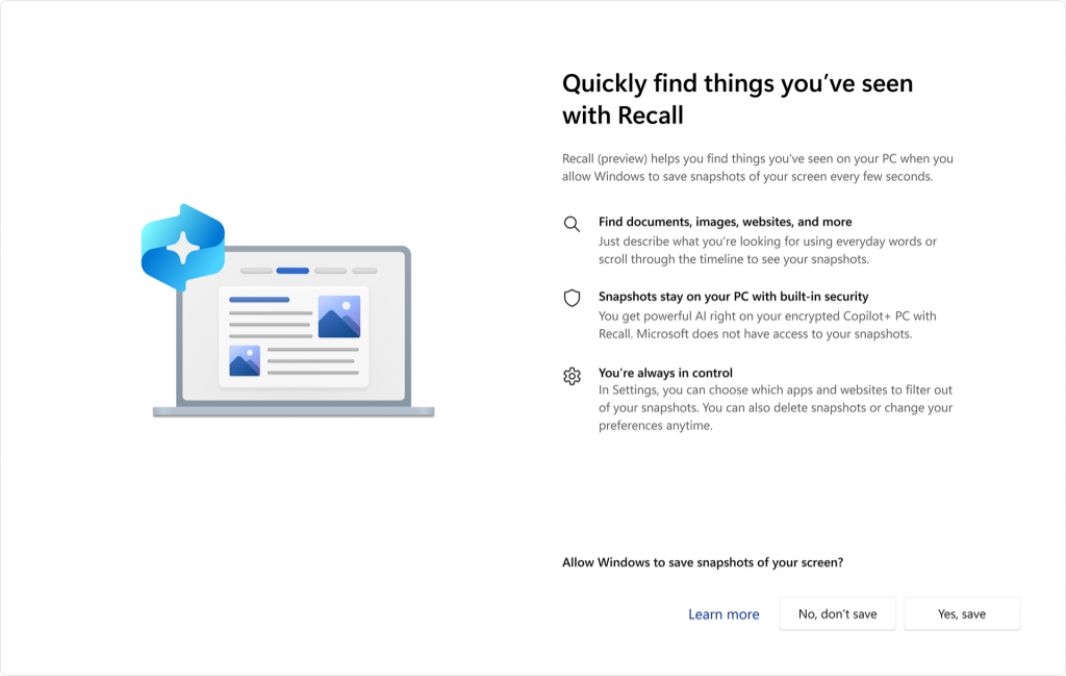

Update the initial setup experience to specifically address my complaint about opt-in/optional and dark patterns language usage. “We have heard a clear signal that we can make it easier for people to choose to enable Recall on their Copilot+ PC and improve privacy and security safeguards,” he writes. “We are updating the setup experience of Copilot+ PCs to give people a clearer choice to opt in to saving snapshots using Recall. If you don’t proactively choose to turn it on, it will be off by default.” Recall will now be opt-in, as it always should have been. Here’s the redesigned setup screen:

“Windows Hello enrollment is required to enable Recall. In addition, proof of presence is also required to view your timeline and search in Recall.” Windows Hello enrollment was always required to enable Recall: Recall requires a Microsoft account (MSA) sign-in and Windows 11 requires all MSA sign-ins to enable Windows Hello. Nothing new there. But the second sentence is unclear: I don’t know what Davuluri means by “proof of presence” being required, but I assume it means that you will need to authenticate against Windows Hello before viewing your timeline or searching Recall. If so, that is new.

“We are adding additional layers of data protection including ‘just in time’ decryption protected by Windows Hello Enhanced Sign-in Security (ESS) so Recall snapshots will only be decrypted and accessible when the user authenticates. In addition, we encrypted the search index database.” Windows Hello ESS was always a requirement for Copilot+ PCs and thus for Recall, but the “just in time” decryption bit is also tied to in-place authentication: Now, when the user tries to view a snapshot for the first time, they will need to authenticate against Windows Hello first, and if that is successful, the snapshot will decrypt. So this, too, is new.

Davuluri also explains that “per-user encryption ensures even administrators cannot view other users’ snapshots,” debunking the claims by those who hacked Recall onto normal Windows 11 PCs. And he clarifies how Recall will work on managed PCs: IT admins can disable the ability to save snapshots, but they cannot enable snapshot saving on your behalf. “The choice to enable saving snapshots is solely yours,” he says.
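Taken together, the rules Davuluri describes for saving snapshots reduce to a small piece of logic: off by default, on only if the user chooses it, and an IT policy that can only switch it off. Here is a minimal Python sketch of that reading. The class, field, and function names are hypothetical and do not come from Windows or any Microsoft API, and the real implementation is surely more involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecallState:
    """Hypothetical inputs for illustration; not real Windows settings names."""
    user_opted_in: bool = False   # off unless the user proactively turns it on
    hello_enrolled: bool = False  # Windows Hello enrollment is required
    admin_disabled: bool = False  # IT policy can disable saving, never enable it


def snapshots_enabled(state: RecallState) -> bool:
    """Return True only when every condition described in the update is met."""
    if state.admin_disabled:
        # An admin "off" always wins over the user's choice.
        return False
    # There is no admin "on": only the user's own opt-in can enable saving.
    return state.user_opted_in and state.hello_enrolled


if __name__ == "__main__":
    print(snapshots_enabled(RecallState()))
    print(snapshots_enabled(RecallState(user_opted_in=True, hello_enrolled=True)))
```

Note that the sketch has no input that lets an administrator turn snapshot saving on, which mirrors the “solely yours” promise.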

In short, Microsoft did make concessions, but not to the liars and fearmongers. Instead, it addressed the real issues with Recall. As far as I’m concerned, making Recall truly opt-in during initial setup is a major win for common sense and decency, and a nice retort to Microsoft’s usual behavior of using dark patterns to trick customers into making bad decisions. It’s impossible not to be happy about this, though this is tempered by the fact that it’s also an exception and not the norm.

And that’s the bigger issue here. Microsoft wanted us to “love” Windows 10, but then it gave us little reason to do so. Now it wants us to trust Windows 11. But trust, like love, is earned, not just given away freely. And it needs to be earned again and again, over time. Microsoft has never achieved this bar with Windows 11. And while these changes to Recall are a nice start, that’s all they are. A nice start.

It’s a good day, and we are right to celebrate. But there is more work to be done before Microsoft or Windows 11 can be trusted.
