More Pixel Photo Love, More Pixel AI Angst (Premium)

- Paul Thurrott

- Aug 26, 2024

We have friends in town for a long weekend. This is distracting, but it also gave me a good chance to test the cameras on the Pixel 9 Pro XL in a fairly natural way. We were out and about over the past few days, visiting some new sights in Pennsylvania (to us, at least) and eating out more. And during the downtime, I started exploring the growing collection of AI services that Google now provides with its phones.

That these things are intertwined is somewhat obvious: As I wrote recently in Google Pixel 9 is at the Nexus of Hardware, Software, and AI (Premium), the history of Pixel is, in many ways, the history of Google’s use of AI as a differentiator for its mobile devices. And the first and most popular way it’s expressed that expertise is through computational photography. And there’s almost too much going on in both cases with the Pixel 9 series. It’s a bit overwhelming.

What’s curious about that, to me, is that you could almost ignore it all. Aside from a few pop-ups to remind you about new features like Gemini Advanced–which Pixel 9 series customers get for free for one year, a $240 value–it’s possible for someone to buy one of these devices, get it set up and configured, and just go about their lives normally, using apps, sending emails, marking text messages as spam, and taking photos, without really thinking about AI or how it’s quickly being sprinkled throughout the system. It’s just … kind of there.

And who knows? Perhaps it would reveal itself to the user, in time, as needed. I assume so. But I can’t wait for that. After a few days of trying to organize in my mind, by which I mean in a Notion list, what’s new from an AI perspective in the Pixel 9 series, our friends arrived, and away went the weekend. I took hundreds of photos. And I started my first experiments with the Pixel’s AI capabilities, most notably those related to photo editing.

People are going to freak out. A lot.

Last year, some of the less sophisticated tech reviewers lost their minds because of the AI photo editing features in the Pixel 8 series smartphones. What a difference a year makes: The AI advances this year are so extreme we’ll look back at 2023 and the Pixel 8 as the good old days. This is both good and bad, of course. You know, like all technology.

That said, the initial round of photos I took delivers nothing but good news: Google is deservedly suspect because of the up-and-down reliability of various Pixel devices over time. But it is likewise deserving of admiration for the quality of the photos anyone can take with a Pixel. This was true when the hardware was lacking, as it was for many years, and it’s even more true–truer, I guess–now that it has terrific camera hardware. If what you’re looking for is point-and-click perfection, the Pixel 9 Pro XL–and, I’d imagine, any 9-series Pixel–is your best bet. The iPhone is terrific, too, but it requires a lot of configuration to get there.

You can, of course, configure the Pixel 9 series to your heart’s content as well. I wrote a bit about this last year in Loving the Google Pixel 8 Pro Camera Upgrade (Premium), and the basics are the same.

The Camera app lets you move easily between Photo and Video modes, and the Pro mode controls are just one tap away. The Photo/Video Settings pane is likewise a tap away. Not much has changed on the photo end of that, and there are still separate General and Pro settings there. But the Video Settings interface has a lot more going on now, with a single settings list and many options to consider.

Among them is a new Video Boost option that will enhance the color, lighting, and stabilization of recorded video and enable 8K video recording. Video Boost feels like a 1.0 offering–it only works with videos “under a certain length” and can take a long time to do its work, reminiscent of basic post-shot photo enhancement on the underpowered Pixels of the past. Unfortunately, I didn’t think to record any video over the weekend, so that’s something for future testing.

I make only a handful of photo configuration changes. I configure Night Sight to automatic, leave Top Shot on automatic, leave the timer disabled, stick with the standard 4:3 aspect ratio, and use the pixel-binning 12 MP resolution JPEG file format. I temporarily enabled a new (to me) Guided Frame option, but the audio cues were beyond annoying, so I quickly killed that. But I did enable Frequent Faces, Framing hints (with a Golden ratio grid type), Rich color in photos (Display P3 format), and Ultra HDR.

In use, the default zoom buttons–.5x, 1x, 2x, and 5x–meet my needs most of the time, and the Pixel is particularly well suited for manual/non-optical zoom up to 10x or perhaps 20x. (It can go to 30x, but at that point, a tripod or other stabilization is a must and the results will still be painterly and sometimes weird.) Out in the world, as we were on a train trip to and from Jim Thorpe on Sunday, or eating out, as we did all weekend, in lighting both perfect and incredibly dark–like the dungeon of an old jail, with almost no lighting–the Pixel 9 Pro XL delivered the goods. There were a few misfires–an errant mis-tap leading to poor focus, perhaps, possibly user error–and many examples of photos that were immediately satisfying. This is what I expect from Pixel.

But what about the AI?

As noted previously, this has long been a selling point for Pixel, but Google has really ramped it up with the 9 series. Among other things, it’s giving away one year of Gemini Advanced, which includes 2 TB of Google One storage, and one of the first things I did was fire up Google One to see how that would work: I am already paying for 2 TB of Google One storage, for example, at a cost of $99.99 per year. The Gemini Advanced offering is billed monthly, at $19.99 per month. And I was curious about the switchover.

There were no issues: My Google One subscription was switched over to what’s called AI Premium, and since my previous annual membership was set for a September 10 renewal, it just set September 10, 2025 as the expiration date for my updated subscription. I haven’t done too much with this yet, but pressing and holding on the Power button now brings up the Gemini pane, which indicates that I have Gemini Advanced and whatever extra functionality that entails.

Among that functionality is Gemini Live, which Google showed off at its Made by Google event earlier this month. This is basically a conversation mode for Gemini chats that works a bit like the Google Assistant–in that you speak and then it speaks back to you, by default–but more sophisticated. The first time you use it, you’re prompted to choose between several available voices, each of which is pretty natural sounding.

If you’re too shy to speak with Gemini this way, you can of course use the standard text-based prompt interface, and Gemini, like Microsoft Copilot, offers various suggestions to help get you started. I did a bit of both, using my standard set of test prompts, and didn’t find any surprises. That said, some prompts–like a travel itinerary–make more sense in text than voice. It didn’t help that Gemini mispronounced many of the Mexico City place names in my itinerary prompt. It did, at least, save a text-based version of the itinerary as well.

For photo editing and image generation, there are some basics and some more radical offerings.

If you’ve used Google’s photo editing features before, you’re likely familiar with things like Magic Eraser, which will examine a photo and suggest items to remove and then let you manually circle or highlight items to remove. I used this to remove a smudge mark from the window of a train that marred an otherwise OK photo, and that type of thing is simple to use and effective.

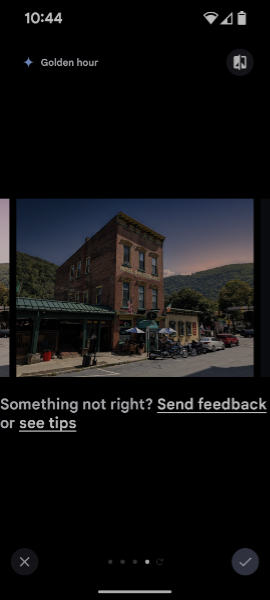

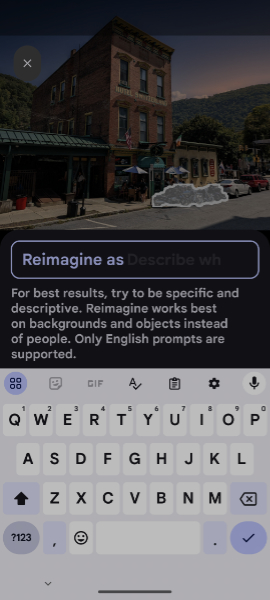

Other features, like Portrait Light, Portrait Blur, and Photo Unblur likewise perform post-processing to improve the quality of your photos after they’re taken. But Google has expanded on that with generative AI capabilities now. The Magic Editor lets you move, resize, or modify items in a photo. And with the Pixel 9 series, there’s a new Reimagine feature that is rather incredible. When you tap this button while looking at a photo, you can choose between three contextual presets–Auto frame, Sky, and Golden Hour–and it will generate variations of that photo accordingly.

If you find one you like, you can save it. But you can also keep going, modifying the image further by circling, tapping, or otherwise selecting items to erase (Magic Eraser) or … Reimagine. And this is where it gets weird. I selected some motorcycles in front of a building, tapped “Reimagine,” and was presented with a text prompt.

Here, I wrote, “[Reimagine as] a classic VW Beetle.” Gemini generated the images and gave me several choices, some more successful than others.

That’s fun, but Auto frame might be my favorite of the new photo editing features in Magic Editor: It provides generative fill capabilities that extend a photo–any photo, including those from the past–to either side, filling in details that the AI imagines. It can be quite effective. For example, my wife took this photo at a local farmer’s market a week earlier.

And then I used Auto frame to show more on the sides.

It’s not perfect–you can see some white onion artifacting on the green pepper to the left–but that can be easily fixed; I just didn’t notice it until later. I will absolutely use this feature.

One of the wildest new features is Add Me, which I’ve not yet tested. I will, though I find asking strangers to take group shots rewarding as they will often ask for the same in return, and it’s usually a fun interaction. And as I was discussing with my wife yesterday, for all the photos I take, I only rarely feel the need to edit them.

But that could change: These features are so useful that even somewhat acceptable shots can benefit from a quick pass of AI magic. For example, this shot had so many superfluous people in it, I ended up just taking a second shot.

But when I was looking at this later, I decided to experiment with an AI solution to the problem. Between the people it suggested removing and the few I added manually, the result is a cleaner shot that even removed the ugly power lines. That’s pretty amazing, though it probably needs some touch-up.

I can’t help but think about the alarmist stories I read last year when the Pixel 8 series arrived. I was particularly annoyed that The Washington Post was so concerned about the AI capabilities that it wrote multiple fearmongering posts about them, but never bothered to even review the phones. (I’m not exaggerating here: One of them was literally titled “Google Pixel’s ad campaign is destroying humanity.”)

I hadn’t heard from the Chicken Littles at WaPo yet on the Pixel 9 series, so I took a look. There’s nothing about the photography functionality, oddly, and there’s no review, of course. But there is a post about the new Pixel Screenshots app, which works like a limited and more manual version of Microsoft Recall. So I guess that’s the AI outrage du jour this year.

The publication at least explains, correctly, that this is an opt-in feature and that it, like Recall, only works on-device, so it’s private and secure. But get this: They like it, Mikey, they like it.

“Compared to the current crop of AI tools embedded into smartphones — like text rewriters, image manipulators, and audio transcribers — this attempt to give phones a kind of visual memory could be surprisingly useful in daily life,” Chris Velazco writes. “The feature can pull useful tidbits from new screenshots you take, but by default, it can’t look at older ones saved in services like Google Photos. (You can, however, manually add older images to the Pixel Screenshots app if you really wanted to.) The app itself admits that its responses ‘may be inaccurate,’ though it gives you an option to double-check its answers.”

And that’s why I’m not super-interested in Pixel Screenshots: This kind of feature is more useful if it happens automatically, as with Recall. I guess it’s better than nothing.

I need to discuss Pixel Studio before moving on. This one is very interesting.

Yes, it’s an image-generation app. Yes, it uses the Gemini Nano multimodal language model on the device to create images. Yes, that seems pretty straightforward. And it can be, though limitations from the local-ness of this solution abound, from the non-photographic quality imagery to the constant misspellings, even when I specify exactly the words I want.

Pixel Studio is clear that it will not use people for image generation. But some have pointed out that this app can generate disturbing imagery whether it’s wanted or not. And while I will spare you the few examples I’ve seen, it can get a little weird. This is the type of thing I meant when I noted last week that our big AI choices on mobile now are slow and steady (Apple) and the Wild West (Google). This is what the Wild West looks like.

Honestly, after Google’s over-reaction to the over-reaction to Gemini image generation earlier this year, this level of freedom is almost welcome. But yeah. It needs to be scaled back a bit. As in all things, there’s a happy medium out there somewhere between the two extremes.

I have a lot more testing to do. But the most useful AI features in the Pixel 9 Pro XL so far map very closely to my general take on AI, that it’s like MSG for software, something that makes what we already have better. In this case, that’s photo editing for the most part. And those new features make sense in context, building on what we had before and giving us more control than ever over the photos we take. It’s pretty great.

As for Pixel Studio, Gemini Live, and the rest, we’ll see. I’m glad I’m not paying for any of that, but I’m happy to tag along and see how it works and how it improves over time. But I don’t see any of this becoming core to my mobile experiences.
