Google Photos Gets Improved Search and Experimental ‘Ask Photos’ Feature

- Laurent Giret

- Sep 05, 2024

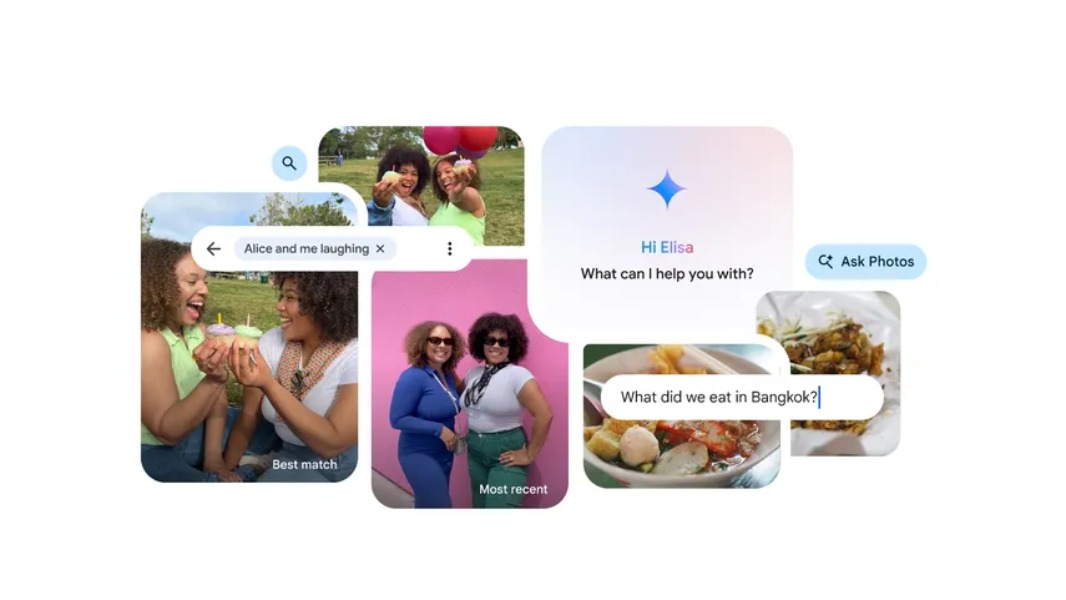

Google is improving the search experience in its Google Photos app by allowing users to type more detailed queries. The company is also trialing a new ‘Ask Photos’ conversational search experience that leverages Google’s Gemini AI.

“As photo libraries get larger and larger, finding what you’re looking for sometimes requires more descriptive queries. Starting today, you can find what you’re looking for using everyday language,” explained Jamie Aspinall, Group Product Manager, Google Photos.

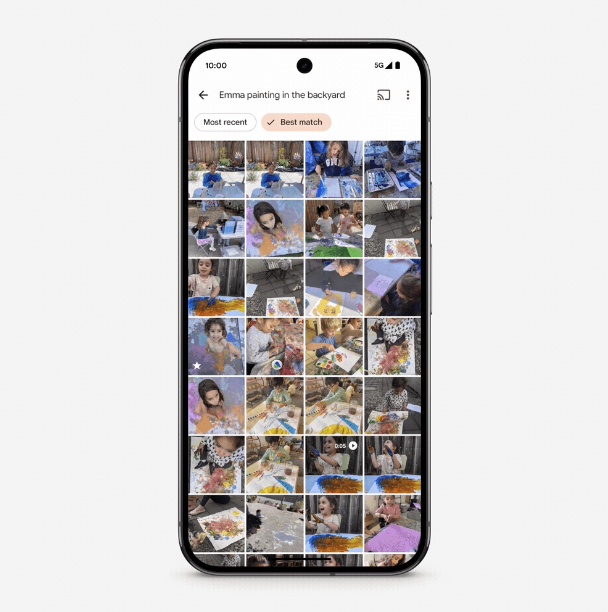

The improved search experience is now rolling out to all English-language users on iOS and Android, with support for more languages coming soon. In practice, Google Photos users can now use queries like “Emma painting in the backyard” to search for specific photos, and results can be sorted by date or relevance.

Starting today, select users in the US can also try the experimental ‘Ask Photos’ feature in Google Photos. To get access, users will need to join the waitlist on the Google Labs website. ‘Ask Photos’ is designed to better understand the personal context in users’ photo libraries, and the conversational experience will let users define what they’re looking for even more precisely.

“Ask Photos understands details, like where you took photos with your camping gear or what dish is sitting on the table in your picture at the restaurant, to give you the answer. And because Ask Photos is conversational, if it doesn’t find the right answer immediately, you can provide extra clues or details to nudge it in the right direction,” Aspinall explained.

Because users can share a lot of personal details in these natural language queries, Google says that it has implemented strong privacy measures in the new ‘Ask Photos’ experience. For example, queries can only be reviewed by humans after being disconnected from the Google account they came from. The company will also be listening to user feedback before making the feature available to more users.