Meta Expands Teen Accounts to Facebook and Messenger

- Laurent Giret

- Apr 08, 2025

Meta announced this morning that it’s bringing new protections to Teen Accounts on Instagram, including a new option to block the live streaming feature for teens under 16. The company is also expanding Teen Accounts to two of its other big platforms, Facebook and Messenger.

Instagram Teen Accounts launched in September 2024 with new safety features to protect teenagers from strangers and potentially harmful content. Teenagers who create Instagram accounts automatically get a Teen Account, and those under 16 need a parent’s permission to change any of the security settings that are enabled by default.

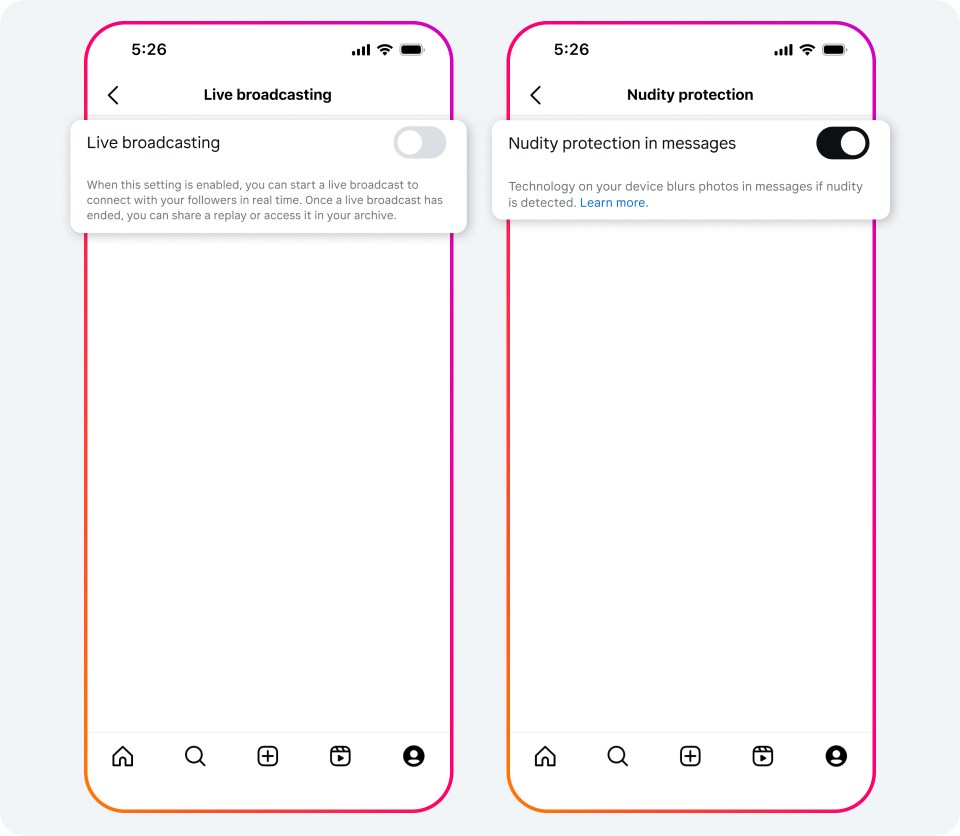

In the coming months, Instagram will automatically prevent teens under 16 from going live unless their parents allow it. The platform will also introduce a control that blurs images containing suspected nudity in Instagram DMs; it will likewise be enabled by default for Teen Accounts.

Starting today, Teen Accounts are also available on Facebook and Messenger, beginning in the US, UK, Australia, and Canada. “Teen Accounts on Facebook and Messenger will offer similar, automatic protections to limit inappropriate content and unwanted contact, as well as ways to ensure teens’ time is well spent,” the company said.

In other Meta-related news, the company ended its third-party fact-checking program in the US yesterday after announcing plans to do so in January. The company is replacing fact-check labels on posts with a new Community Notes system on Facebook, Instagram, and Threads. Just like on X/Twitter, these notes will be written and rated by users themselves, and Meta previously said that it expects these crowdsourced notes to be “less biased” because they will “allow more people with more perspectives to add context to posts.”