Now Microsoft Has an Agentic AI System for Finding Security Vulnerabilities Too

- Paul Thurrott

- May 13, 2026

Microsoft revealed today that it has created a competitor to Anthropic Mythos called MDASH, the Microsoft Security multi-model agentic scanning harness. MDASH identified 16 of the Windows vulnerabilities that Microsoft fixed in this week’s Patch Tuesday updates.

“Unlike single-model approaches, the harness orchestrates more than 100 specialized AI agents across an ensemble of frontier and distilled models to discover, debate, and prove exploitable bugs end-to-end,” Microsoft vice president Taesoo Kim writes. “The results speak for themselves: 21 of 21 planted vulnerabilities found with zero false positives on a private test driver; 96 percent recall against five years of confirmed Microsoft Security Response Center (MSRC) cases in clfs.sys and 100 percent in tcpip.sys; and an industry-leading 88.45 percent score on the public CyberGym benchmark of 1,507 real-world vulnerabilities—the top score on the leaderboard, roughly five points ahead of the next entry.”
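For readers who want to sanity-check the figures in that quote, here is a minimal Python sketch of the standard recall and precision formulas applied to the numbers Kim cites. The function names are mine, and the count of CyberGym tasks solved is only what the published percentage implies, not a figure Microsoft stated.

```python
def recall(true_positives: int, false_negatives: int) -> float:
    """Share of real vulnerabilities that were found."""
    total = true_positives + false_negatives
    if total == 0:
        raise ValueError("no real vulnerabilities to measure against")
    return true_positives / total


def precision(true_positives: int, false_positives: int) -> float:
    """Share of reported findings that were real."""
    total = true_positives + false_positives
    if total == 0:
        raise ValueError("no findings were reported")
    return true_positives / total


# Private test driver: 21 of 21 planted bugs found, zero false positives.
planted_recall = recall(true_positives=21, false_negatives=0)
planted_precision = precision(true_positives=21, false_positives=0)

# CyberGym: 88.45 percent of 1,507 tasks. The solved count below is
# inferred from the percentage and is an assumption, not a quoted number.
implied_cybergym_solved = round(0.8845 * 1507)

print(f"planted recall:    {planted_recall:.0%}")
print(f"planted precision: {planted_precision:.0%}")
print(f"implied CyberGym tasks solved: about {implied_cybergym_solved}")
```

Recall measures how many known bugs were caught, while precision measures how many reports were real, which is why Microsoft cites both "21 of 21" and "zero false positives" for the test driver.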

Mozilla has already had incredible success using Anthropic Mythos to fix vulnerabilities in its Firefox codebase, so it was only a matter of time before Microsoft did something similar. But as the firm explains, its codebases are massive and quite complex, and Windows, Hyper-V, Azure, and the device driver and service ecosystems around them are private to Microsoft, so they’re not part of outside language models’ training data. “On this surface,” Kim writes, “a model has to actually reason.”

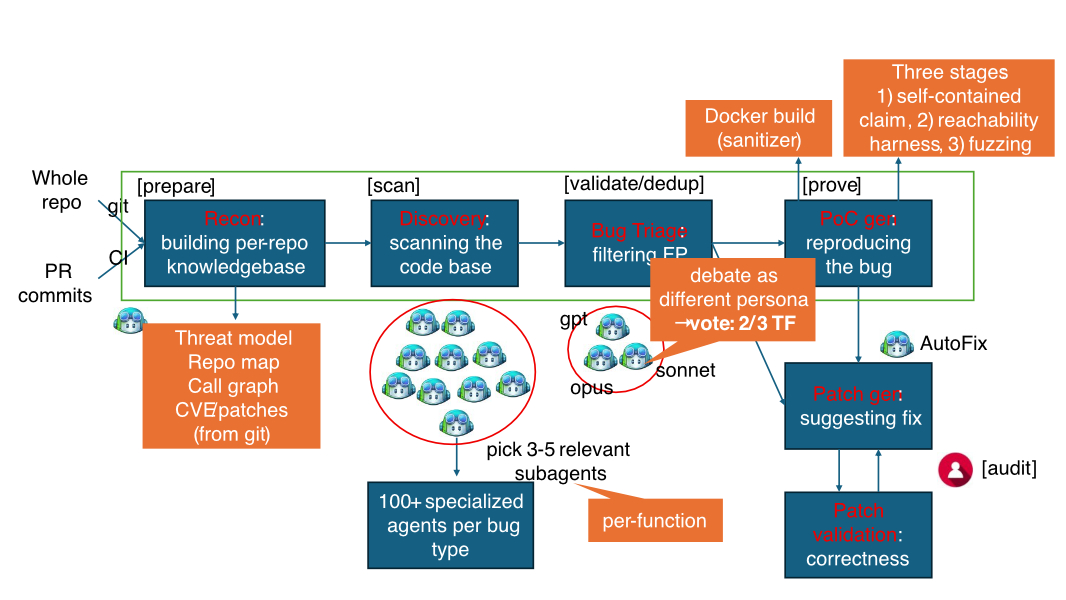

As Microsoft explains, MDASH is an agentic vulnerability discovery and remediation system that manages an ensemble of diverse AI models. These models examine a codebase and then output validated, proven findings. The architecture is model-agnostic and portable across model generations, so it can improve as the technology evolves.
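Microsoft has not published MDASH's internals, so the following is only a toy Python sketch of the general idea described here: several interchangeable "finders" examine the same code, findings that enough of them agree on survive, and a separate "prover" step validates what is left. Every name in it (`scan`, `Finder`, `Prover`, `quorum`, and the string-matching finders) is hypothetical and stands in for real models.

```python
from collections import Counter
from typing import Callable, Iterable

# Hypothetical interfaces: any callable with these shapes can be plugged in,
# which is what makes the harness independent of a particular model.
Finder = Callable[[str], Iterable[str]]
Prover = Callable[[str, str], bool]


def scan(code: str, finders: list[Finder], prover: Prover, quorum: int = 2) -> list[str]:
    """Return findings that at least `quorum` finders report and the prover confirms."""
    votes: Counter[str] = Counter()
    for finder in finders:
        # Count each finding at most once per finder.
        votes.update(set(finder(code)))
    candidates = [finding for finding, count in votes.items() if count >= quorum]
    return sorted(finding for finding in candidates if prover(code, finding))


# Toy stand-ins for models: simple string checks, not real analysis.
def finds_strcpy(code: str) -> list[str]:
    return ["unbounded strcpy"] if "strcpy(" in code else []


def finds_copy_calls(code: str) -> list[str]:
    return ["unbounded strcpy"] if "strcpy" in code else []


def finds_gets(code: str) -> list[str]:
    return ["gets call"] if "gets(" in code else []


def toy_prover(code: str, finding: str) -> bool:
    # A real prover would try to demonstrate the bug; this one is hard-coded.
    return finding == "unbounded strcpy"


sample = "void f(char *s) { char b[8]; strcpy(b, s); gets(b); }"
result = scan(sample, [finds_strcpy, finds_copy_calls, finds_gets], toy_prover)
print(result)
```

Because the harness depends only on the shape of a finder and a prover, swapping in a newer model means replacing one callable rather than rewriting the pipeline. That is one plausible reading of "portable across model generations."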

“We are at a moment in the industry where AI-powered vulnerability discovery stops being speculative and starts being an engineering problem,” Kim continues. “The findings in this Patch Tuesday and the retrospective recall on five years of [previous vulnerability] cases are evidence that AI vulnerability findings can scale.”