Microsoft Announces its Maia 200 AI Accelerator for Datacenters

- Paul Thurrott

- Jan 26, 2026

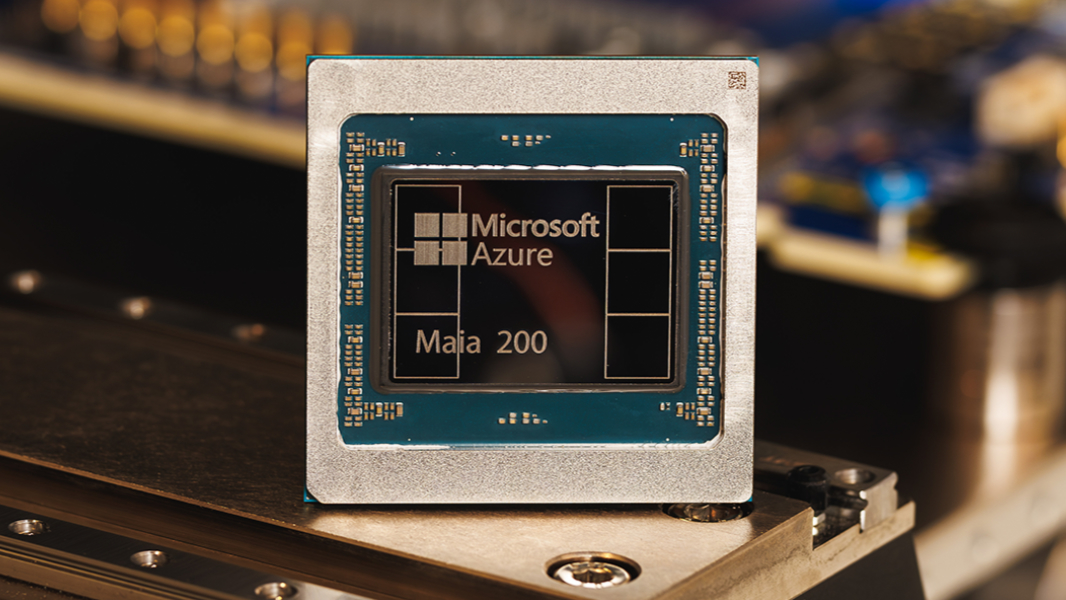

Microsoft today announced Maia 200, its second-generation AI accelerator for datacenters, which is optimized for inference.

“Maia 200 is a breakthrough inference accelerator engineered to dramatically improve the economics of AI token generation,” Microsoft executive vice president Scott Guthrie explains. “Maia 200 is an AI inference powerhouse. It’s an accelerator built on TSMC’s 3 nm process with native FP8/FP4 tensor cores, a redesigned memory system with 216GB HBM3e at 7 TB/s and 272MB of on-chip SRAM, plus data movement engines that keep massive models fed, fast and highly utilized.”

Microsoft claims that Maia 200 is now the most performant first-party silicon from any hyperscaler. It delivers three times the 4-bit floating point (FP4) performance of the third-generation Amazon Trainium and exceeds the 8-bit floating point (FP8) performance of Google’s seventh-generation TPU. Microsoft notes that Maia 200 is also the most efficient inference system it has ever deployed, with 30 percent better performance per dollar than the latest-generation hardware in its datacenters today.
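To put the 30 percent figure in cost terms, here is a rough sketch of the arithmetic. It assumes that "performance per dollar" means throughput divided by cost and that the workload is held constant; Microsoft has not published how it measured the figure, and the `relative_cost` helper is purely illustrative.

```python
def relative_cost(perf_per_dollar_gain: float) -> float:
    """Cost of a fixed workload relative to the baseline hardware.

    perf_per_dollar_gain is fractional: 0.30 means 30 percent better
    performance per dollar. Assumes the workload itself does not change.
    """
    if perf_per_dollar_gain <= -1.0:
        raise ValueError("gain must be greater than -1.0")
    return 1.0 / (1.0 + perf_per_dollar_gain)


# Microsoft's claimed 30 percent improvement (the only figure from the article).
cost = relative_cost(0.30)
savings = 1.0 - cost
print(f"relative cost: {cost:.3f}, savings: {savings:.1%}")
# prints "relative cost: 0.769, savings: 23.1%"
```

Under those assumptions, 30 percent better performance per dollar works out to roughly 23 percent lower cost for the same amount of inference work, not 30 percent.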

Microsoft says that Maia 200 will serve multiple models in its heterogeneous AI infrastructure, including the latest OpenAI GPT-5.2 models, and that this will bring a “performance per dollar advantage to Microsoft Foundry and Microsoft 365 Copilot.” The Microsoft Superintelligence team will also use Maia 200 for synthetic data generation and reinforcement learning to improve next-generation in-house models.

Maia 200 is already deployed in Microsoft’s Iowa datacenters. The US West 3 datacenter region near Phoenix, Arizona, will follow, along with other regions.