No (Local) AI Developer Left Behind? (Premium)

- Paul Thurrott

- Feb 11, 2025

Microsoft has some notable AI wins for developers, GitHub Copilot being key among them. But despite being announced 9 months ago, the Windows Copilot Runtime is still a no-show as I write this. That may finally be changing, though the value proposition of these technologies is unclear given the relatively small addressable market for on-device AI.

A bit of recent history

Microsoft formally announced the Windows Copilot Runtime during Build 2024 on May 21, 2024. This was a surprise to me because there was no mention of it in the Book of News that Microsoft provided to press and bloggers ahead of the event. And Microsoft CEO Satya Nadella had previously uttered this term the day before at the Copilot+ PC launch without ever explaining what it was. So it’s been miscommunicated from the start.

“Every developer can take advantage of it,” Nadella said during the Copilot+ PC event, referring to what was then being positioned as a wave of innovation tied to Copilot+ PCs and their powerful and efficient NPUs. “What Win32 meant for the graphical user interface, the new Windows Copilot Runtime that we’re announcing today will be to AI.”

This statement is incorrect. At least twice.

Win32 was the standard set of application programming interfaces (APIs) that Microsoft provided to developers who wished to create native 32-bit apps for Windows, starting with Windows NT in 1993. But it was just a 32-bit version of the original Windows APIs, which were later renamed to Win16. So it was Win16, and Windows pre-NT, that kicked off the GUI era for the PC. And not to get too far off the rails here, but one might argue that Visual Basic provided an even more popular introduction to GUI programming for developers than Win16 or Win32 ever did. It was definitely a lot more accessible.

Also, the Windows Copilot Runtime is not to AI what Win32 or anything else was to the GUI. Instead, the Windows Copilot Runtime is exclusively about AI running locally on an NPU-powered Copilot+ PC. It’s a subset of AI. And at the time of this writing, it constitutes an incredibly tiny minority of overall AI usage. The vast majority runs in the cloud, and the Windows Copilot Runtime cannot help developers with that.

⁉️ So what is this thing?

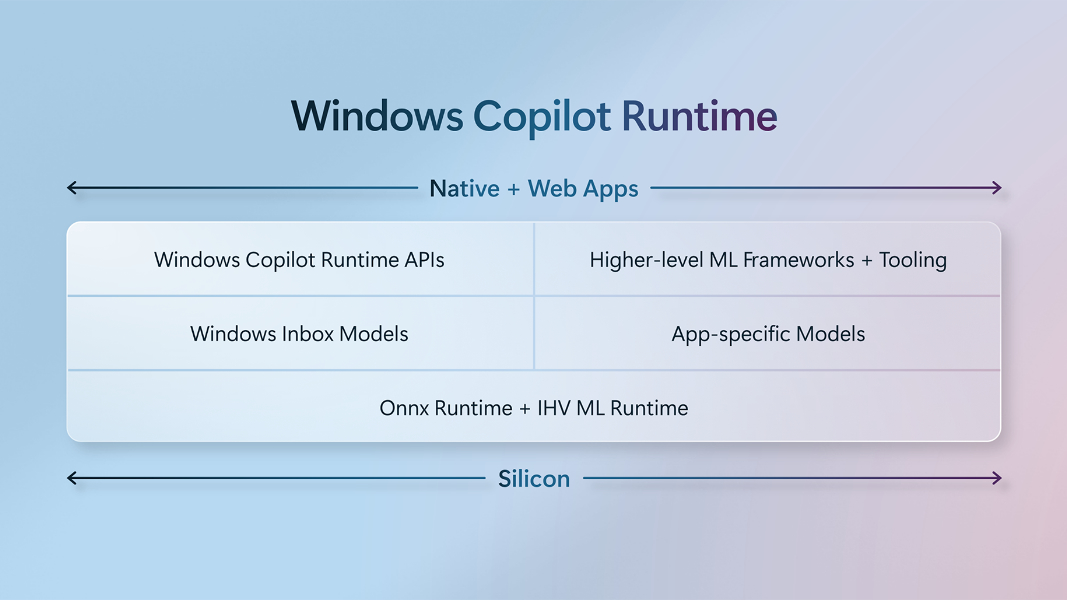

I’ve been trying to understand the Windows Copilot Runtime (WCR) since it was first announced, but I keep running into the same issue. The name doesn’t describe what it is. That is, it’s very much not a runtime. Apps do not run on top of a WCR runtime that runs on top of the standard Windows runtime, as .NET apps do (with the .NET runtime inserted between apps and the standard Windows Runtime in that case). The language Microsoft uses is confusing, in part because it’s commingling terms.

“A core element of our re-architecture of Windows, that’s something Yusuf [Mehdi] mentioned, is the Windows Copilot Runtime,” Windows lead Pavan Davuluri said later at the Copilot+ PC launch. “It is a powerful AI capability woven into every layer of Windows. The Windows Copilot Runtime includes more than 40 AI models [that] empower Windows experiences like Recall. It also provides the infrastructure that allows us to continuously update and maintain the quality of on-device models. All this functionality in the Windows Copilot Runtime makes it possible to reimagine what apps can do, speeding up the pace of innovation.”

This, too, requires a bit of explanation. As with the Nadella comments above, most of it is incorrect.

First, Yusuf Mehdi–who appeared in the Copilot+ presentation between Nadella and Davuluri–never mentioned the Windows Copilot Runtime. He did mention those 40+ on-device AI models, and though he mentioned developers, once, that was during the handoff to Davuluri.

“Woven into every layer of Windows” is a curious turn of phrase. I’m not sure how many layers there are in Windows, or even what “layers” means in this context. But if you think about apps utilizing frameworks and APIs and running on top of Windows, either directly (natively) or indirectly (using a runtime like .NET), I suppose there are at least a few layers, architecturally speaking. We would learn more about the WCR the next day at Build, as promised, but not in the keynote, which was heavily focused on cloud AI. But what was never said explicitly then was that most of the APIs it offers–via, wait for it, the Windows Copilot APIs–run on top of the Windows App SDK. Which is itself a combination of APIs and a framework for creating desktop apps. (In this sense, it runs “on top of” the Win32 API, but is far less abstracted than, say, .NET.)

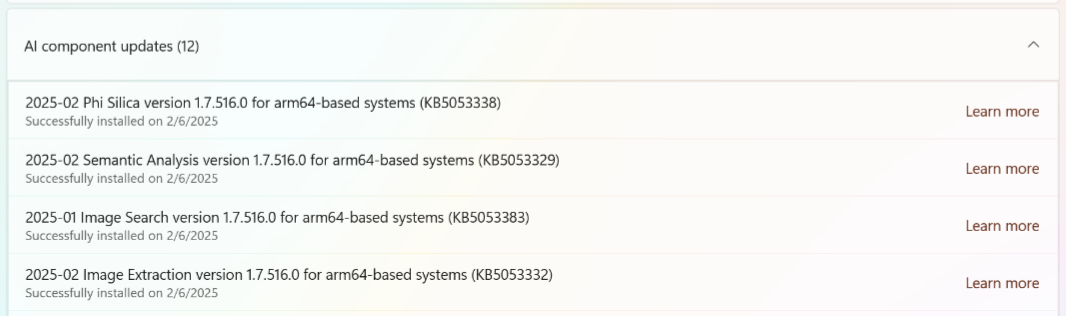

WCR does “include” a collection of AI models, what I would call small language models (SLMs), most of which are preinstalled on Copilot+ PCs and/or installed and updated as needed. But the “infrastructure” he mentions is just Windows Update. When you first run Recall, for example, you are prompted to open Windows Update and install four (it was originally three) of them. These models arrive in the form of cumulative updates (CUs) for Windows, and the infrastructure for installing and updating them is not in any way sophisticated. In fact, it’s a terrible user experience. Each of the models (CUs) appears independently in Windows Update, and you have to keep checking for updates until each is installed, one at a time. This is true for updates too, and Recall will just stop working until you’ve manually downloaded and installed each new or updated model.

Here are the three most recent of these updates in Windows Update. Every one of those “Learn more” links resolves to a “Sorry, page not found” on Microsoft Support.

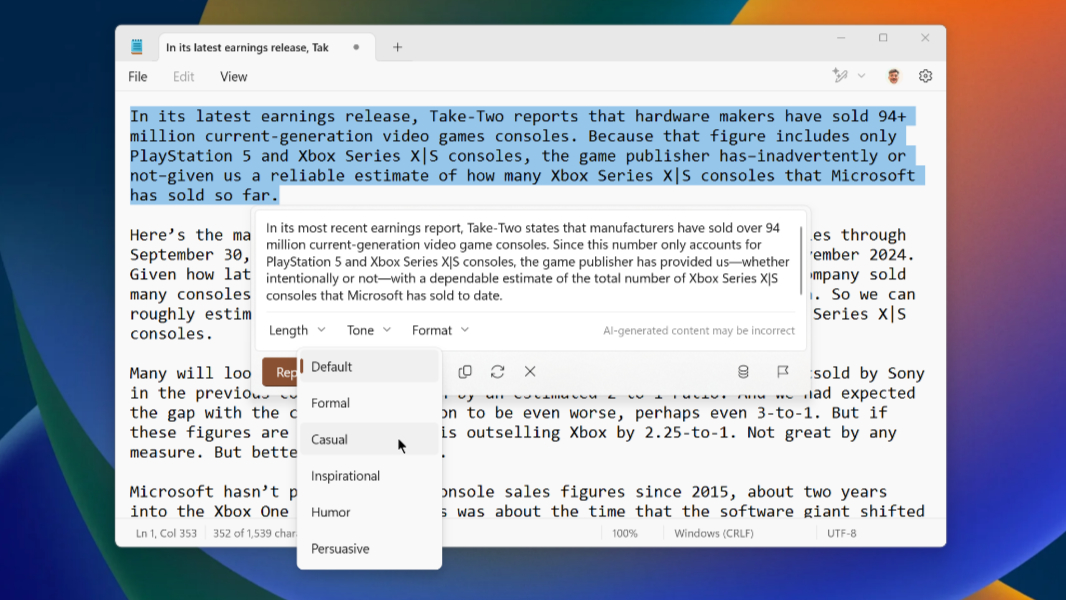

As for reimagining apps, one might argue that there’s little evidence of that happening in the real world. But Microsoft has updated some of its in-box Windows 11 apps, like Notepad, Paint, and Photos, with a growing set of on-device AI-based capabilities. And though much of this is still locked in the Windows Insider Program, this work will come to stable soon. And some of these features are truly useful and interesting. The text summarizing and rewriting capabilities in Notepad (admittedly, still available in preview and not yet in stable) being one of my favorite examples.

In any event, we left the Copilot+ event confused about a lot of things, and the WCR was one of those things. But the next day, at the Build 2024 keynote, Microsoft expanded on this information and provided more details.

The day after

The Build 2024 keynote was an hour and fifteen minutes of dense AI content, all of it cloud-based. Microsoft’s CEO and others talked up Microsoft 365 Copilot, Copilot Pages, Agents, the AI stack, Azure AI Foundry, and even a curious little cloud-connected Windows 365 device called Windows 365 Link. But Copilot+ PC was mentioned only in passing, and despite Build being a developer show, ostensibly, the Windows Copilot Runtime wasn’t mentioned even once.

Microsoft did discuss Copilot+-specific, meaning local AI, features separately. Things like Recall, Click to Do (which at the time was only going to be available inside Recall), a Windows Search experience that still hasn’t shipped, and, of course, the WCR, which developers could use to harness the underlying AI capabilities that are unique to AI and Copilot+ PCs. Some would allegedly be “no code” experiences, though it wasn’t clear what that meant.

“Windows Copilot Runtime (WCR) is a reliable platform for developers to create innovative experiences more securely and efficiently, regardless of where they are on their AI development journey,” a blog post attributed to Pavan Davuluri reads. “Windows Copilot Runtime encompasses AI frameworks and tool chains that enable developers to integrate their own on-device models into Windows, utilizing robust client silicon such as GPUs and NPUs.”

“WCR includes a set of APIs that are powered by over 40 on-device models included with Windows,” the post continues. “Phi 3.5 Silica, built from the Phi series of models, is optimized for the Snapdragon X series NPU in Copilot+ PCs, enabling text intelligence capabilities like text summarization, text completion and text prediction. Developers can access Phi 3.5 Silica API and Optical Character Recognition (OCR) API which recognizes and extracts text present within an image in Windows App SDK 1.7 Experimental 2 release in January.”

And there it is. January.

January?

Yes. In May 2024, Microsoft said that it would bring on-device AI capabilities to an experimental release of the Windows App SDK the following January, over 6 months later. So these capabilities would not ship in stable form until possibly a year after they were announced. Indeed, it’s reasonable to believe that Windows App SDK 1.7 will arrive at or around Build 2025, a few months from now.

When will then be now?

As noted, I’ve repeatedly looked to see whether any WCR bits have been made available to developers. The Windows App SDK, which is Microsoft’s newest native apps platform for Windows developers and the successor to the woeful Universal Windows Platform (UWP), has stable, preview, and experimental channels. The stable release, as of this writing, is version 1.6.4. The preview channel is on 1.6 Preview 2. And the experimental channel that Davuluri referenced last May just moved to 1.7 Experimental3 last week. (The inconsistent naming is delightful.) So Microsoft missed that January milestone by some number of days. Which is honestly pretty good. But today, finally and for the first time, developers can start experimenting with the WCR features and APIs that Microsoft revealed last year.

These include:

Phi Silica. This is Microsoft’s small language model (SLM), or what it calls a local language model, for providing text-based responses to text prompts. It can be used to summarize text, rewrite text for clarity, and convert text to a table format. And it includes content moderation functionality to “classify and filter out potentially harmful content from being prompted to or returned.” You can learn more about Phi Silica support in Windows App SDK experimental here.

Text recognition (OCR). This API can “detect and extract text within images and convert it into machine-readable character streams,” where text is “characters, words, lines, and polygonal text boundaries” with associated confidence levels. You can learn more about Text recognition support in Windows App SDK experimental here.

Image super resolution. This functionality, which is seen today in the Photos app, is used to “increase the sharpness and clarity of an image and upscale the image up to 8x its original resolution.” You can learn more about the Image Super Resolution APIs in Windows App SDK experimental here.

Image description. This API can be used to generate a text description of an image, configurable as either a short caption or a long description for users with accessibility needs. You can learn more about the Image Description API in Windows App SDK experimental here.

Image segmentation. This API can be used to identify and visually mask specific objects within an image. You can then perform specific actions on just that part of the image, though obvious choices like generative erase are not part of this API. (Perhaps they are available elsewhere, or will be.) You can learn more about the Image Segmentation API in Windows App SDK experimental here.

Beyond these, there are or will be APIs for Windows Studio Effects, User Activity (as used by Recall), Semantic Search (Recall, coming soon to Windows Search), and Live Translations in Live Captions. There are also some non-AI improvements to the Windows App SDK related to windowing and notifications.

Chicken, meet egg

The problems here are many. Aside from the slow-to-market issue–which, admittedly, Microsoft revealed upfront last May–WCR suffers from a classic issue that will ensure adoption remains minimal. By tying these features to only those PCs that support powerful local AI capabilities, e.g. Copilot+ PCs, Microsoft is artificially limiting their appeal. This is what ultimately sunk products like Windows Media Center and Windows Tablet PC: Because their unique functionality wasn’t broadly available, most developers simply ignored them.

Of course, we know how those stories ended: Those unique features were eventually rolled into mainstream Windows. I’ve said since I heard the term Copilot+ that it’s only a matter of time before all PCs meet the Copilot+ PC specifications, and that as that unfolds, the Copilot+ PC term will simply go away. But that could take years, and until then, why would any developer bother with these features when the potential audience is so small?

We will see exceptions, and some developers are already supporting powerful NPUs and their local AI functionality, especially with video editors and other content creation apps. But the better approach here is the orchestration capability I discussed last year. In keeping with the very architecture of Windows, these APIs should lean on the NPU when present, but fall back to the GPU, CPU, or cloud when not. And that should be transparent to developers. It should be what the APIs do, abstracting the underlying hardware from the apps developers create.
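
To make that idea concrete, here is a minimal sketch of what such orchestration could look like from the app's side. This is not how WCR or the Windows App SDK works today, and every name in it (`Backend`, `PREFERENCE_ORDER`, `pick_backend`, `summarize`, `make_backend`) is hypothetical and invented purely for illustration. The only thing taken from the paragraph above is the preference order: NPU first, then GPU, CPU, and finally cloud.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical sketch: none of these names are real Windows App SDK or WCR APIs.


@dataclass
class Backend:
    """One place an AI workload could run (NPU, GPU, CPU, or cloud)."""
    name: str
    is_available: Callable[[], bool]   # hardware/network probe (stubbed here)
    run: Callable[[str], str]          # performs the actual work (stubbed here)


# Preference order described in the article: NPU first, cloud last.
PREFERENCE_ORDER = ("npu", "gpu", "cpu", "cloud")


def pick_backend(backends: Dict[str, Backend]) -> Backend:
    """Return the most preferred backend that reports itself as available."""
    for name in PREFERENCE_ORDER:
        backend = backends.get(name)
        if backend is not None and backend.is_available():
            return backend
    raise RuntimeError("no AI backend available")


def summarize(text: str, backends: Dict[str, Backend]) -> str:
    """What the app calls. It never needs to know which backend was chosen."""
    return pick_backend(backends).run(text)


def make_backend(name: str, available: bool) -> Backend:
    """Build a stub backend whose availability is fixed, for demonstration."""
    return Backend(
        name=name,
        is_available=lambda: available,
        run=lambda text: f"[{name}] summary of {len(text)} chars",
    )


if __name__ == "__main__":
    # A PC without an NPU or a usable GPU: the call still succeeds on the CPU.
    older_pc = {n: make_backend(n, n in ("cpu", "cloud")) for n in PREFERENCE_ORDER}
    print(summarize("Some long document text", older_pc))
```

The point of the design is that the app only ever calls `summarize`. Whether the work lands on an NPU or somewhere else is decided inside the API layer, which is exactly the abstraction that would widen the audience beyond Copilot+ PCs.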

On that note, it’s impossible not to think that that’s the next phase of this evolution, or perhaps something that occurs over several versions of the Windows App SDK. Which, from what I can see, is evolving on a pretty slow schedule right now. When you consider how quickly AI is evolving, this feels almost irresponsible. But developer tools and APIs need time, and setting something in stone only to have it change months later is, if anything, even less defensible.

I’m curious to see what Microsoft says about all this at Build 2025. In the meantime, I’m going to see if I can’t whip up some quick demos of some of these WCR capabilities. But first, I need to figure out how to even use an experimental version of the Windows App SDK. As always, there are so many questions. (What about .NET? What about cloud AI? On and on it goes.)
