Why do we install applications?

Yo, that’s the question I’ve been thinking about. I don’t know all the technicalities, which is why I wanted to write something about it.

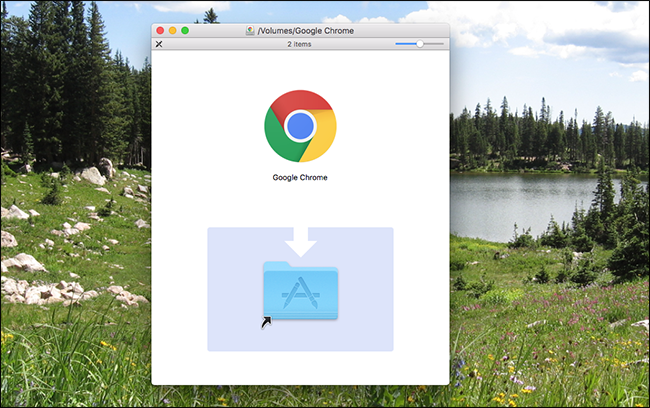

Windows has portable exe files. Mac has dmg files, which are mounted once so the application can be dragged to the Applications folder. Linux has AppImage, which works in a similar way.

I believe both exe and AppImage could work the same way as the Mac approach. Depending on how the application is configured, it could set up a user profile when launched for the first time. I don’t think that counts as installation.
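To show what I mean by setting up a profile on first launch, here is a minimal sketch in Python. It is only an illustration, not how any particular application does it: the function name `ensure_profile`, the app name `ExampleApp`, and the `settings.json` file are all made up for the example.

```python
import json
import tempfile
from pathlib import Path


def ensure_profile(profile_dir: Path) -> bool:
    """Create a default profile if none exists.

    Returns True when the profile was created (first launch),
    False when it was already there (any later launch).
    """
    settings = profile_dir / "settings.json"  # hypothetical settings file
    if settings.exists():
        return False
    profile_dir.mkdir(parents=True, exist_ok=True)
    # Hypothetical defaults; a real application would store its own settings.
    settings.write_text(json.dumps({"language": "en"}))
    return True


if __name__ == "__main__":
    # A temporary directory stands in for the user's home folder here.
    with tempfile.TemporaryDirectory() as tmp:
        profile = Path(tmp) / "ExampleApp"
        print(ensure_profile(profile))  # first launch: profile is created
        print(ensure_profile(profile))  # later launch: nothing to do
```

The application itself stays a single file. The only thing written outside it is the user’s own profile folder, and that happens when the program runs, not during an install step.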

I’m not talking about applications such as WindowBlinds or Groupy which require deep system integration. I’m talking about ordinary applications.

I have been using LibreOffice with extensions and dictionaries as an AppImage on Linux, and the experience was the same as using an installed version, just without the system integration.

I believe Apple figured this out long ago: you get complete system integration just by dragging the application to the Applications folder.

It’s almost like I want to get a Mac just to use this “magic” myself.

Both Windows and Linux are good at spreading an application’s files all over the system, so I keep thinking: why not do it like macOS?

One file (archive) = one application. No need to pollute the system with files everywhere.