As Google Pushes the Final Pixel 4 Update, a Look Back at What Went Wrong (Premium)

- Paul Thurrott

- Oct 04, 2022


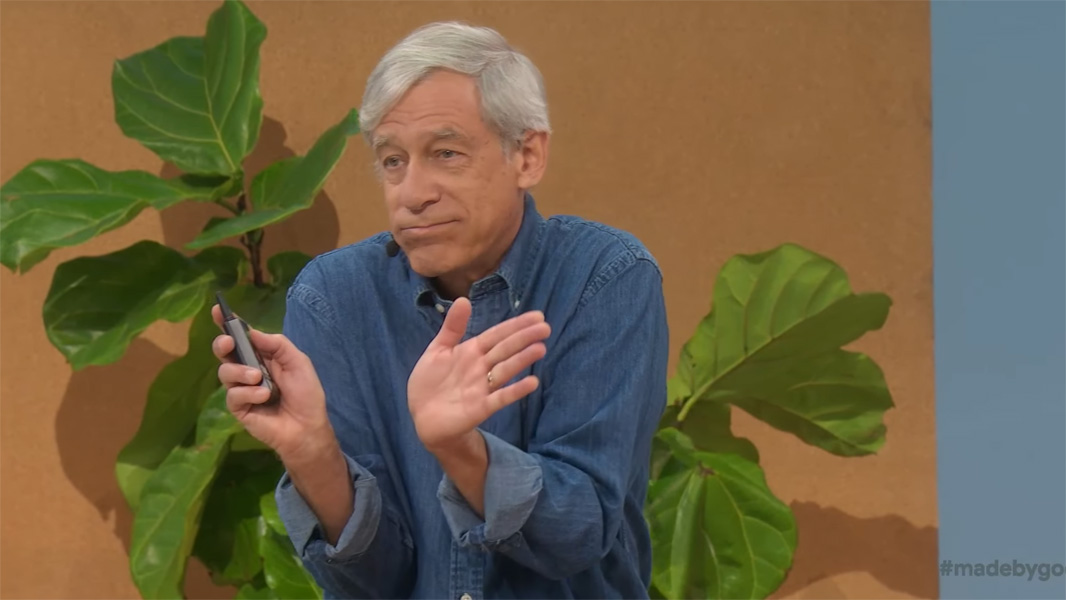

I was transfixed by Google’s Pixel 4 launch presentation, which featured an incredible lecture on smartphone photography by distinguished engineer Marc Levoy. Unfortunately, this event is where it all went south for Pixel, both as a brand and technologically.

How instrumental was Levoy in making Pixel the best smartphone platform for photography? Well, he led the Google Research teams that created computational photography wins like HDR+, Portrait Mode (which somehow created background blur with a single lens), and Night Sight, among other accomplishments. These are technologies that competitors later raced to duplicate, often taking years to rise to the quality that Pixel had previously achieved.

But the problem for Levoy, and for Pixel, is that he made one major blunder that set the platform back at a crucial moment. It’s reminiscent to me of a Steve Jobs decision in the very early 2000s to choose DVD over CD-R for the iMac at a time when the world wanted to create mix CDs: Levoy chose telephoto over ultra-wide for the Pixel 4’s second lens.

Both decisions are classic examples of bad timing, but Apple, at least, scrambled to fix its mistake. Google? Not so much. In fact, the mistake set back Google so badly that the next generation and a half of Pixel handsets (the Pixel 4a, Pixel 5, and Pixel 5a with 5G) included no flagship-class products and didn’t move the needle on photography at all, giving Apple and Samsung the time they needed to push their own flagship smartphones into the lead, photographically. Pixel has never recovered.

To be fair, Pixel had more than its fair share of issues, hardware and software alike, before Levoy’s crucial mistake. But photography was the one thing that kept beleaguered Pixel fans, including me, coming back again and again for the otherwise buggy products.

Google’s computational photography prowess was described as the platform’s “secret sauce” at the Made by Google ’19 launch event at which the Pixel 4 debuted. And Levoy’s appearance at this event was incredible, a master class in which an expert at the height of his field descends from Mount Olympus to educate the masses and, in this case, explain why what Google was then doing was best-in-market.

The key to that success, of course, was computational photography, the “software defined camera,” as Levoy called it. And this success pointed very clearly to how Google’s advances in AI and other computer sciences reaped real-world benefits for its customers. Benefits that, in this case, they could see with their own eyes. The first three generations of Pixel smartphones, for example, handily outperformed their competition, despite Pixel sticking with a single lens (for the most part; the Pixel 3 XL actually had two front-facing lenses for some reason) while others started adding lenses. Google’s software was better than its competitors’ otherwise superior hardware.

There’s no reason to recap everything talked about that day, though I strongly recommend that anyone interested in smartphone photography watch this part of it (Levoy comes on at about the 47-minute mark) because everything he discusses applies to today’s smartphones too: in this talk, you can see the genesis of many of the photographic advances that Apple and Samsung have made in the years since.

What I do want to focus on, however, and pardon the pun, is the mistake that Levoy and Google made with the Pixel 4. For the first time, this Pixel would include two (rear-facing) lenses, the main (wide) lens that graced its predecessors, and a new, second telephoto lens. As noted, Google’s competitors were also using multiple lenses by this point, but they had almost universally chosen an ultra-wide lens instead.

“Pixel 4 has a roughly 2x telephoto lens plus our Super Res Zoom technology,” he said of that configuration. “In other words, a hybrid of optical and digital zoom … you get sharp imagery throughout the zoom range.”

Impressive imagery followed, of course it did, with applause ringing out throughout the audience. But it was his comments about ultra-wide-angle lenses that caught my attention and introduced the first warning signs of what was to come.

“By the way,” he said, making an exaggerated gesture, “most popular SLR lenses do magnify scenes, not shrink them. So while wide-angle can be fun, we think telephoto is more important.”

He was wrong.

Pixel 4 did ship with four new computational photography features, putting Apple, Samsung, and the rest of the industry on notice: Live HDR+, white balancing, dual-camera Portrait Mode, and, perhaps most impressively, astrophotography. But Google’s emphasis on telephoto over ultra-wide set back the company, forcing it to belatedly (and temporarily) drop the telephoto lens in its next three handsets and quickly adopt ultra-wide. And it unfortunately chose the weakest ultra-wide lens imaginable, offering lower quality and a less-wide field of view than the competition. It’s a problem that still dogs the Pixel 6 family today and will continue to be an issue with Pixel 7. We’re talking about years of setbacks there.

“Despite having two rear camera lenses, in a first for a Pixel, the Pixel 4 XL lacks the ultra-wide-angle lens that the iPhone 11 Pro Max series and Samsung Note 10+ have,” I wrote in my initial review. “Google downplayed this omission at its launch event, but this omission is misguided: ultra-wide-angle shots are even more useful on the rear camera system than they are on the front, and having enjoyed this experience on other handsets, I really miss it here.”

“Google got a lot of attention at the Pixel 4 series’ launch because of the handsets’ astrophotography capability … but astrophotography is a niche feature, regardless, and not to beat this to death, I’d much rather have an ultra-wide lens,” I added several months later in a Pixel 4 XL follow-up.

In the years since Pixel 4, Levoy left Google, and Pixel lost a generation and a half of forward progress while Google readied its AI-focused Tensor microprocessor. And the Pixel 4 family and its successors continued the unfortunate tradition of suffering from many reliability issues, leading many—including me, eventually—to give up on the product line.

Today, Apple and Samsung still rule the smartphone market, and their computational photography capabilities are now on par with, if not better than, Google’s. More to the point, Apple and Samsung’s flagship smartphones offer better overall photography experiences. (And both have much better ultra-wide lenses, of course.) And making the mainstream options the best options for photography has pretty much killed any reason to own a Pixel, unless, of course, you just really, really prefer a clean (but not stock) Android software image.

That’s too bad. And it could have gone very differently had Google simply done what Huawei did before the U.S. government destroyed the market for its smartphones: embrace both ultra-wide and telephoto (with a periscope lens, no less) at the same time. Instead, Google allowed the competition to run away with a market it once owned. And here we are.

By the way, if you do still own a Pixel 4 or 4 XL, I’m so sorry. But your final monthly software update is now available.
