Stevie Bathiche on AI Agents, NPUs, and the Future ⭐

- Paul Thurrott

- Sep 25, 2025

-

3

No one explains AI as clearly as Microsoft technical fellow Stevie Bathiche, so I was delighted to see him again this week at the Snapdragon Summit 2025 in Maui. He appeared in Qualcomm’s product announcement keynote, but I was also part of a small group that was able to pepper him with questions later that day. And you won’t be surprised to know that I asked him about my favorite AI topic, orchestration.

If you’re not familiar with Stevie, he’s one of the good guys. He’s one of the nicest and smartest people I’ve ever met at Microsoft, but I love his ability to communicate complicated topics in a straightforward manner. His explanations of AI app models and why the NPU matters so much are required watching/reading. So let’s start there.

? 2023: AI application structures

At Build 2023, Stevie gave one of the greatest talks I’ve ever witnessed. It’s so good that I’ve referenced it repeatedly. He explained that AI capabilities would roll out over time using three application structures:

- Beside applications. In this structure, you use AI next to existing (legacy) apps, as is the case with Copilot alongside, say, Microsoft Word and Edge. Basically a sort of sidebar, but literally a copilot.

- Inside applications. Here, AI is embedded in a new app (or a thoroughly overhauled existing app), resulting in simpler user interfaces without any loss of capabilities. He cited Clipchamp and Designer as examples.

- Outside applications. In this structure, AI capabilities are exposed as agents (services) that are controlled by an orchestrator. This orchestrator will evaluate user questions and commands and then determine which combination of AI models, apps, services, or whatever else can get it done. “It’s like a Copilot of Copilots,” he said, “a very powerful application structure.”

At the time of this talk, Microsoft was busy rolling out besides applications n the form of what’s now called Microsoft 365 Copilot and the Copilot sidebar in Edge, and it had announced that Copilot would come to Windows 11 later that year, and the initial release was literally a sidebar. And Clipchamp and Designer obviously existed too, so we had some basic inside applications to experience.

Outside applications are also happening now as Agentic AI, but over a longer period of time as capabilities are built out. That this is happening first in standalone applications—Perplexity Comet, an AI-powered web browser, is a good example, but you can also kick off these activities from any AI chat interface and so on—may seem ironic, but that’s how these transitions occur.

The end game here is more ambient, with natural language interactions, and spread across multiple devices that will include wearables like glasses, rings, smartwatches, and so on, smart home devices, PCs, phones, and tablets, and whatever else. Meaning that instead of running an app on a particular platform, one will have access to these agentic capabilities from just about anywhere in time.

? 2024: Why the NPU is so important to AI

At Build 2024, Stevie discussed why NPUs are so important to Copilot+ PCs and local AI. In doing so, he compared running the same AI workload against a CPU, GPU, and NPU, and demonstrated that the NPU was 32 times faster than the CPU and about 16 times faster than the (Nvidia) GPU. The point being that NPUs aren’t just about efficiency, they’re optimized for certain types of tasks, and are also much faster than CPUs and GPUs at those tasks. This shift represented a step function change for AI, he said.

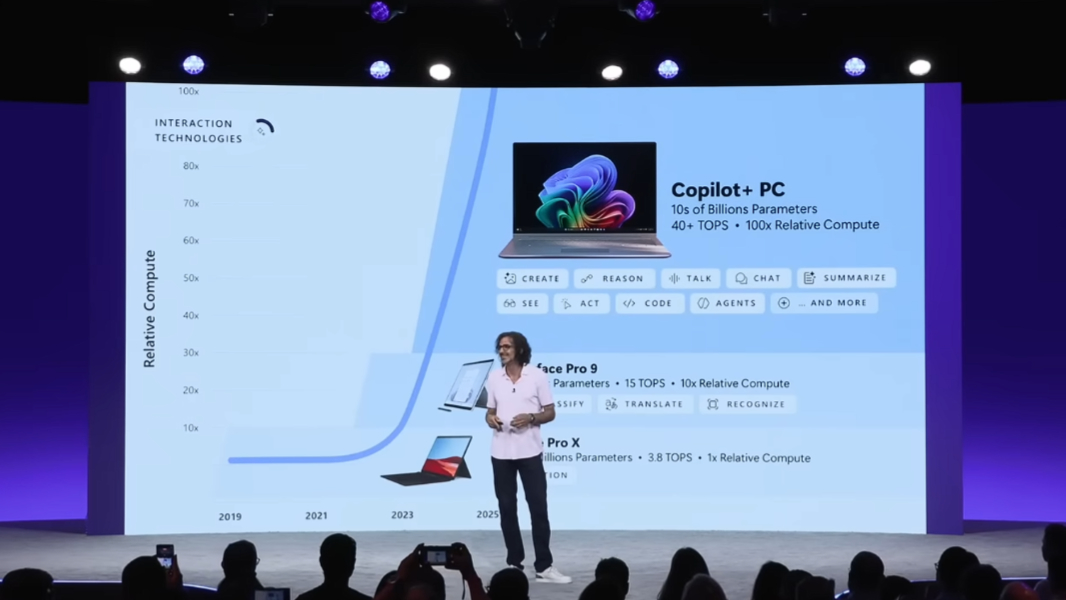

This talk occurred right after Microsoft announced the first generation Copilot+ PCs, which were all based on Qualcomm’s amazing and Arm-based Snapdragon X chipsets. Among other things, Copilot+ PCs must include a 40+ TOPS NPU to handle on-device AI tasks that interact with on-device small language models.

That Microsoft was eager to offload as much AI processing as possible from its overburdened datacenter servers is obvious. But the benefits of local AI, or hybrid AI, in which local and cloud AI can be used interchangeably, can be difficult to pin down. The most successful use cases to date have been with cameras, on both phones and PCs, but subsequent gains are very specific even to the individual feature level in various apps.

The result is a problem for marketers as there is no such thing as a universal “AI killer app.” Instead, we see thousands—and, soon, millions—of tiny individual gains across whatever apps we use on PCs and mobile. Even more problematic, most users will never even know that local AI made a specific feature work better or faster. It will just happen. And so local AI and the NPUs that power it have very real perception problems that continue to this day.

? Stevie at Snapdragon Summit 2025

Flash forward about 16 months, and Stevie once again found himself onstage, this time in front of the media and enthusiasts at Qualcomm’s Snapdragon Summit this week. He appeared about 55 minutes into the Product announcements keynote, and after Qualcomm’s Kedar Kondap had introduced the Snapdragon X2 Elite and X2 Elite Extreme processors that will power the next generation of Copilot+ PCs. Among other advances, the X2 features an NPU capable of 80 TOPs, almost double the speed of the 45 TOPS NPU in the first generation chips. This, Kondap had said, would allow for concurrent agentic AI workloads on future Copilot+ PCs.

Stevie started off his talk with some hard numbers that I assume most in the audience didn’t truly understand. (Yes, I include myself in that list.)

Copilot+ PCs, he said, were responsible for an overage of over 1.4 trillion “inferences” per month, a measure of the number of times their NPUs have collectively answered a question or otherwise come to some conclusion using new data, meaning data that the underlying AI model was not trained on. These NPUs did this work 100 times more efficiently than would have been possible on other chip types (CPU or GPU), in the latest example of what he called a “historic pattern” in which our expectations change because of advances in our devices and the experiences they provide. In this case, high performance compute is combined with low power consumption.

(He didn’t reference this, but the slide behind him at the time also noted that Copilot+ PCs conducted an average of over 73 billion semantic searches each month.)

“We’ve his pattern before,” he said. “It happens over and over again, defining each epoch of computing, spanning many decades, even to the era when computers were mechanical. You have a step change in compute ability, and that enables us to write software that changes how you interact with your computer. And ultimately involve the computer itself and how you use it and where you use it. That step change in compute has been driven by a combination of scaling laws across hardware, software, and AI.”

He then referenced Moore’s law, a prediction made by Intel cofounder Gordon Moore in the 1960s that the number of transistors on integrated circuits would double every year (later revised to every two years). This was roughly true over the ensuing five decades, but the even more rapid advances we see with NPUs have exploded past this trend, Stevie said.

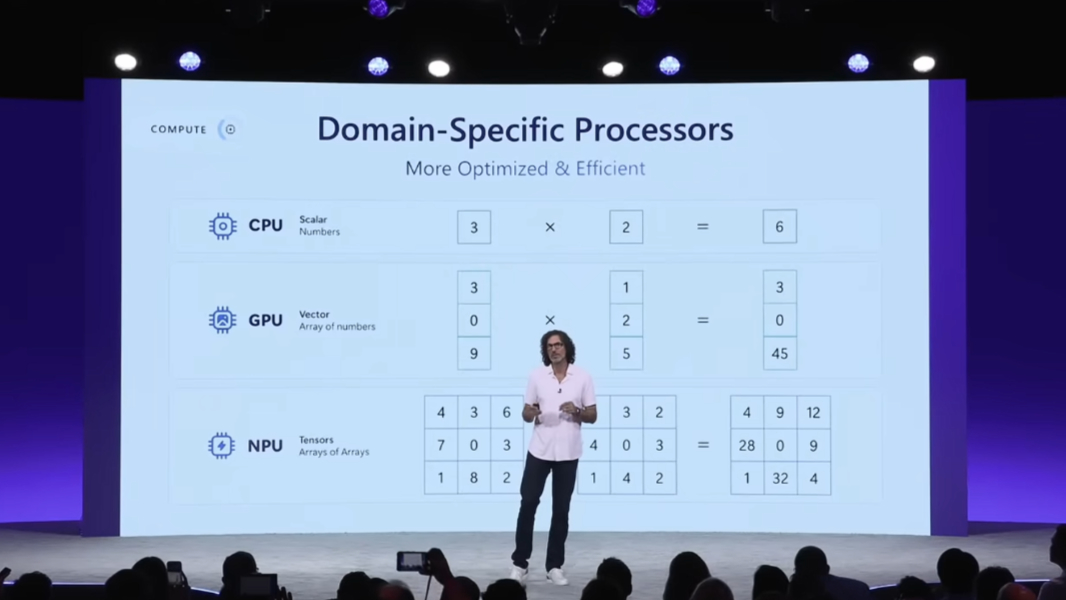

NPUs are optimized to work on tensors, the number structure behind neural networks, he said, with a slide behind him explaining that CPUs are optimized for numbers, GPUs are optimized for arrays of numbers, and NPUs are optimized for arrays of arrays. CPUs and GPUs ignited computer-aided design (CAD) in the 1990s, but NPUs provide “a level of efficiency and speed like none other.”

“On the software side of things, NPUs are driving techniques like quantification. This enables us to make models drastically smaller, all without losing quality.”

He then demonstrated a Microsoft prototype of a 2-bit version of Phi Silica, one of Microsoft’s on-device SLMs, running on Snapdragon X silicon. It offers the same performance as the shipping model, but it runs faster, uses half as much RAM, and requires less power. This is an important step in evolving on-device models to be much more powerful than is the case today. As an example, Stevie showed that a 14 billion parameter model that Microsoft had created exclusively for the cloud can now run locally using Snapdragon silicon, something the company thought to be impossible a year ago. It runs just as well on battery, too, thanks to one of the unique strengths of Qualcomm’s PC chip architecture.

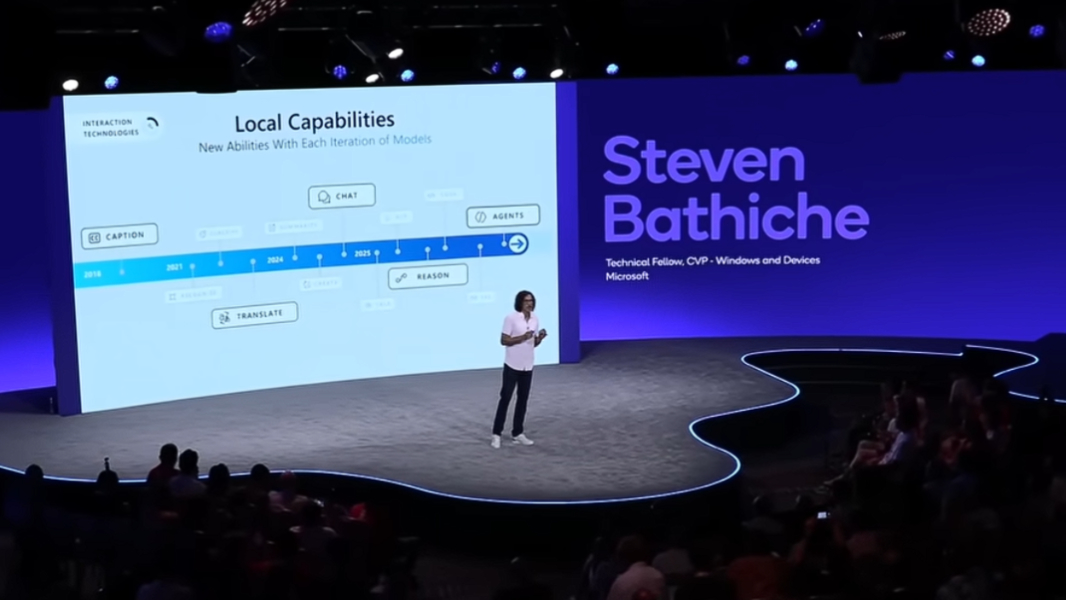

The speed at which AI has improved in recent years is impressive or even scary, and Stevie charted the progress, from live captioning in 2019 to translations, chat, reasoning, and now AI agents. But there was also a corresponding chart showing how NPUs in Qualcomm-based PCs had improved over these years as well. There was the Surface Pro X with its 3.8 TOPS NPU and 1x relative compute, the Surface Pro 9 with its 15 TOPS NPU and 10x relative compute, and then the first round of Copilot+ PCs with 40+ TOPS NPUs and 100x relative compute, each delivering more and more capabilities.

All this change, over such a short period of time, is what enabled Microsoft and its hardware partners to create the Copilot+ PC, a new class of PC and a step function change for the platform. But Microsoft, like many of us, has long been interested in what comes next, the so-called “next wave” of computing. And while there have been many half-steps and even pretenders to this throne, it’s never really been clear where we’re heading. Some believe, for example, that XR glasses may be it, or some other specific hardware platform. But Microsoft has a different take on the future.

“At Microsoft, we believe that the next computer is not any single thing at all,” Stevie said. “It is a system, orchestrated by the cloud, operating as one at the edge [meaning client devices like phones, PCs, and wearables]. And that’s ultimately what we are building. It is a system on top of systems to help you do more. It is cloud technology working with your local devices delivering next-generation computing, working as one, bringing security, speed, and intelligence to you. And it is that system that our agents and next generation software and devices will operate. It’s so important for you to understand.”

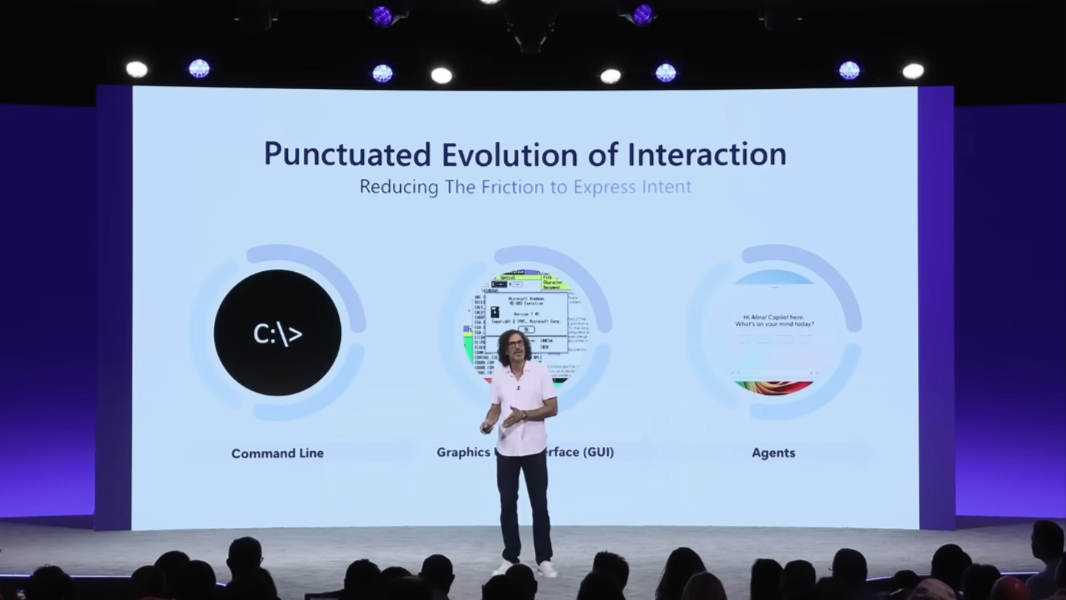

“We are at the precipice of agent-driven applications,” he said, concluding his talk, “which means what you ask for or, what you wish is what you get.”

Here, he is drawing a comparison to the “What You See Is What You Get” (WYSIWYG) era that was enabled by graphical user interfaces (GUI) on PCs starting in the 1980s. With AI, in other words, this is shifting to “What You Ask For Is What You Get” (WYAFIWYG).

?️ Q & A with Stevie

So that’s all very exciting and everything, but I was excited to have the chance to speak with Stevie as part of a small group of media after the keynote. Here are a few of the discussions that I think were most relevant.

? Stevie’s favorite Copilot+ PC features

Stevie’s answer to a question about his favorite Copilot+ PC features surprised me a bit, in that I figured he would mention Click to Do. He didn’t, though two of his choices are on my short list as well. He said that semantic search was “super powerful,” thanks to the natural language interactions and local and cloud file accessibility. And that the Settings agent illustrated how you could take a model, apply it to a complex interface, and make it so simple that anyone can use it.

But he also held up Co-Creator in Paint as a favorite, stating that it was an example of “directive AI,” rather than generative AI in which you describe what you want and then get an answer that you may or may not like, often requiring multiple refinements. With Co-Creator, you can instead describe individual strokes (of a pencil or pen) in a cooperative interaction rather than ask/answer. And … yeah. I had never really thought of it that way, in part because I was distracted by the relatively low quality output compared to cloud AI-based image generation.

“That’s a really powerful concept,” he said.

?️ Concurrent AI processing in an 80 TOPS NPU

It’s not difficult to imagine how a more powerful NPU can do more, generally speaking. But the notion of “concurrent AI” brings up images of the current units processing AI in a single-threaded way, or in a serial fashion. That’s not entirely true, since today’s NPUs can already multitask, so to speak. But as we move into this 80 TOPS era, what we’re seeing is the ability to interact with multiple models simultaneously as before, but also with the same model multiple times at once, where each tasks is specialized for whatever use case.

?️ AI agents are the “outside app” structure

Someone else brought up Stevie’s Build 2023 talk that I always reference, noting that while the beside and inside app structures he mentioned then had happened, it seemed like the outside app structure had not. I already knew the answer to that, but Stevie correctly noted that AI agents are, in fact, the outside app structure, it’s just that we’re in the early stages of that right now.

The problem here is manifold, but we’re also working through how to update existing apps for AI, whether it be with inside app or outside app structures. Photoshop would never look like it does if it were invented today in the AI era, he said, and I think you could apply that thought to just about any complex legacy app, including those in Microsoft Office. But when you think about agents doing things on your behalf, you also have to deal with a future in which Photoshop as a UI doesn’t exist anymore either. The interactions get very different and then the app as we know it today starts to disappear.

?️ Orchestration and the future of Windows

A few other people also talked around the topic I really wanted to get to, including a question about whether it would be possible for Windows to offload non-AI tasks to the NPU as a way to implement multi-chip processing. Stevie said this would be like using a butter knife to eat a steak, in that it was possible but not optimal. It’s easy to fail over from NPU to GPU and then from GPU to CPU, he said. But the reverse was a non-starter: Each chip is optimized for specific workloads.

This was my cue to ask about orchestration.

I told Stevie that AI interactions in which the user picks a model from a list in a picker feels primitive and that what’s needed is the orchestrator he discussed two years ago. Would Windows become that orchestrator and abstract these tasks for users and agents?

Obviously, Stevie wasn’t going to divulge exactly what’s happening in the future with Windows or Microsoft more generally. But he did say that there were different ways of interacting with models and that some number of companies might all use an identical model but get different results by orchestrating those models for their specific needs. Others might use a technique called MOE (Mixture of Experts) to selectively activate parts of the model and, in doing so, evolve that model.

“I’ll give you an insight that might be important,” he said. “There’s this interesting trend that I’ve seen over the past year or two. The large (language) models end up eating the structure around them, including the orchestrators.”

Here, I had to interrupt him to be sure I heard that correctly. Eating the structure around them?

“Yes. I think that’s a really important concept for people to understand. When the first foundation models came, people wrote all this code around them. And that code ended up being brittle and unsophisticated in some ways, and complicated. And so people said, why don’t we just keep that structure and train it back into the model? Some of these orchestrators in the beginning were so complicated and large and caused the thing to be slow. And they’re, like, why don’t we just put it in the model? One way that we talk about this is to call it ‘model first’ or inline. And you see that happening over and over again.”

“The best orchestrators I’ve seen are actually the simplest,” he continued. “That’s an important trend, and I think that we’re going to continue to see more complexity that’s eaten up by the model because the mathematics behind the model can do a better job than traditional code.”

Yes, this makes my hurt, too. AI continues to be a complex beast, full of new terminology and concepts, even for those who live in this world every day. And yes, I still have so many questions. But there are some interesting hints in there, I think, that point to where Microsoft is going with AI in Windows, especially.

Gain unlimited access to Premium articles.

With technology shaping our everyday lives, how could we not dig deeper?

Thurrott Premium delivers an honest and thorough perspective about the technologies we use and rely on everyday. Discover deeper content as a Premium member.