Microsoft Releases its First Xbox Transparency Report

- Laurent Giret

- Nov 14, 2022

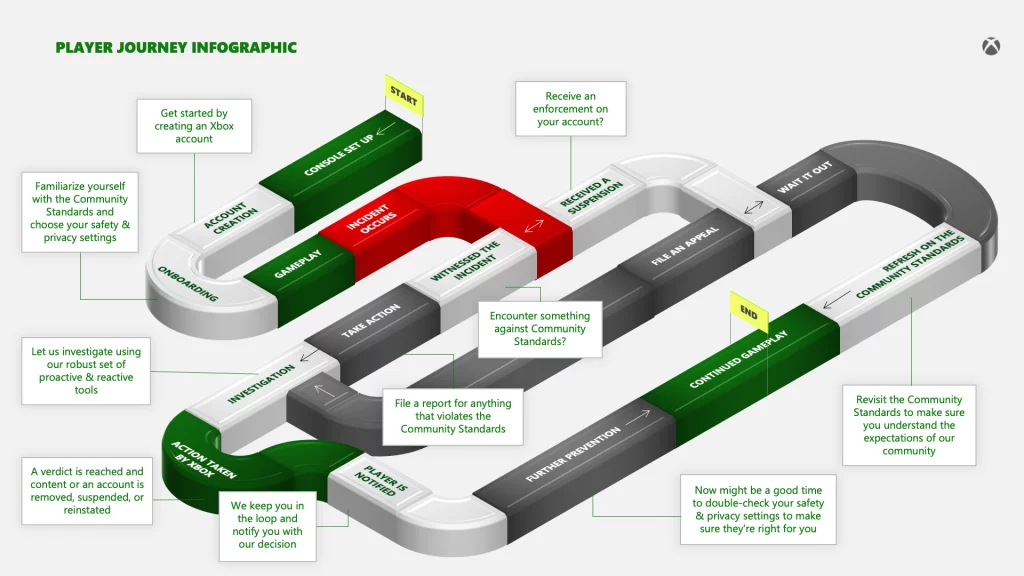

Microsoft today published its first Xbox Transparency Report, which details the company’s efforts to make the Xbox network a safe environment for players. The software giant has committed to releasing a new report every six months so the community can track its progress on content moderation and safety features.

“This report complements the continued review of our Community Standards, making it easier for all players to understand what is and isn’t acceptable conduct; continued investment in moderation tools; and ongoing partnership with industry associations, regulators, and the community,” wrote Dave McCarthy, CVP Xbox Player Services.

Microsoft has a team of content moderators working every day to take proactive safety measures on the Xbox network and protect gamers from negative content before it can reach them. This team also acts on reports from Xbox players who encounter toxic behavior on the network.

Here are the main highlights of this first-ever Xbox Transparency Report, which covers the first half of 2022:

Proactive moderation: Microsoft issued more than 4.33M proactive enforcements against automated or bot-created accounts, which represents 57% of the total enforcements during the period. Microsoft’s proactive moderation is also up 9x from the same period last year.

Reactive moderation: Xbox gamers submitted over 33 million reports during the period (down 36% year-over-year), with 46% of them about communications and 43% about conduct.

Enforcement actions: Microsoft took over 7 million enforcement actions during the period after determining that a violation of its community standards had taken place.

Enforcements by policy area: Cheating/inauthentic accounts were the biggest source of enforcement (4.33M), followed by profanity (1.05M) and adult sexual content (814K).

Enforcements by action taken: The majority of enforcement actions were account suspensions (63%), followed by account suspension plus removal of the content (34%) and content-only enforcement (3%).

Last year, Microsoft acquired Two Hat, a content moderation solution provider that had been working with Microsoft in recent years on improving proactive moderation on the Xbox platform. The company also plans to increase its investments in content moderation to make the Xbox network a safer space for all gamers.

Phil Spencer, CEO of Microsoft Gaming, tweeted today that the release of this first Xbox Transparency Report was “an important milestone” for the company. “Being transparent about impact on and for our players is the right thing for us to do,” the exec emphasized.