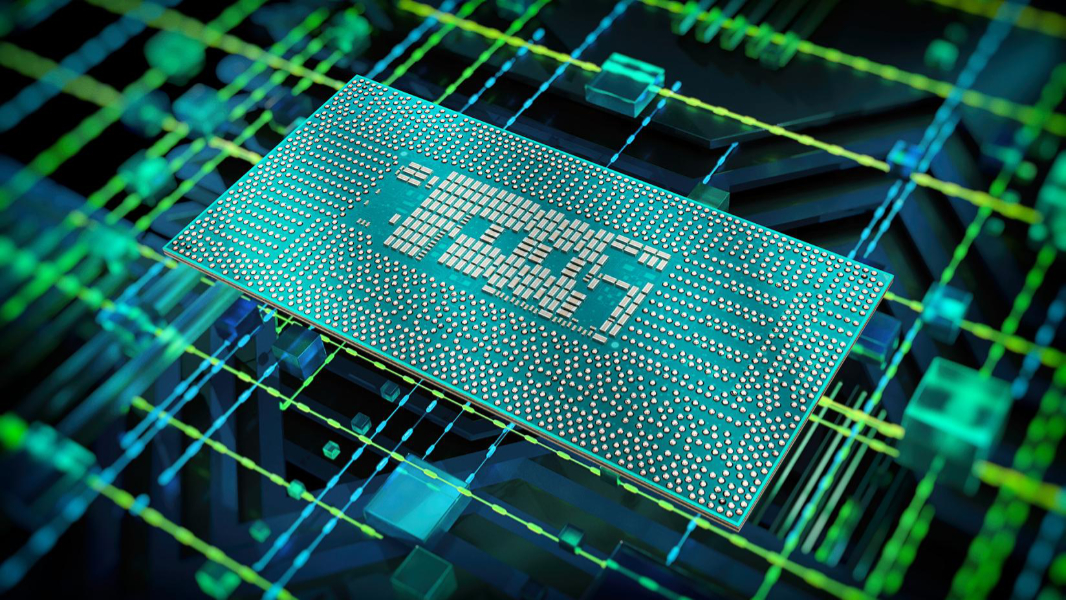

12th-Generation Intel Core Chipsets Come to Mobile PCs

- Paul Thurrott

- Jan 04, 2022

Intel today announced 28 new 12th-generation Core mobile processors along with an additional 22 desktop processors. The new chips will appear in PCs throughout 2022.

“Intel’s new performance hybrid architecture is helping to accelerate the pace of innovation and the future of compute,” Intel executive vice president and general manager Gregory Bryant said. “And, with the introduction of 12th generation Intel Core mobile processors, we are unlocking new experiences and setting the standard of performance with the fastest processor for a laptop – ever.”

That fastest-ever mobile processor is the flagship 12th-generation Intel Core mobile CPU, the Intel Core i9-12900HK. Built on the Intel 7 process, the Core i9-12900HK utilizes Intel’s new hybrid architecture, which combines performance cores (P-cores) and efficiency cores (E-cores) with intelligent workload prioritization and distribution. It will be available at frequencies of up to 5 GHz with 14 cores (6 P-cores and 8 E-cores), and Intel says it offers a 28 percent performance improvement over its predecessor.

Intel also introduced various 65- and 35-watt 12th-generation Core desktop processors, available now, that are aimed at gaming, creation, and productivity. And it disclosed its plans for upcoming 12th-generation Core U- and P-series mobile processors that will power new ultra-thin-and-light laptops.