From the Editor’s Desk: The Future is Now (Premium)

- Paul Thurrott

- Jul 14, 2025

The personal technology market is perhaps best defined by a steady churn of change. But there are so many examples of tech products, software, and services that have remained largely unchanged for a long time. And yet here, in 2025, these things are starting to change. And it’s worth evaluating how these changes might impact us and how we get work done daily.

Of course, everyone’s job is different, as are the skills and perspectives that each individual brings to the table. So rather than speculate on how others might get work done, I can more accurately explain how my own workflows are being impacted by these changes. And maybe some of this will resonate with you as well.

Baby steps with AI

I’ve written and spoken about my curiously slow move to using AI for work. But there are some exceptions, and I expect to keep evolving my use of AI.

The most obvious exception is one that will be familiar to anyone who reads Thurrott.com: I use AI, almost always Microsoft Designer, to create the hero images for articles when I want something unique. These are often of a certain style, of course, but the benefits are obvious enough: I get a unique image, which is not the case when I use stock photography. And AI gives me multiple choices, which I can fine-tune when needed.

But here’s another example that is less obvious. I often quote from executives or presenters at the companies we cover, and that’s easy enough if the information arrives via a traditional press release (which is less common with each passing year) or blog post. But a lot of information is delivered via video, too. This can be something canned, or it can be a recording of a live event.

In the before times, I routinely underwent a tedious process by which I would have to start and stop the video I was trying to decipher. I’d cue up the video as close as possible to the point where the quote I needed started, which YouTube in particular offers no help with. Tap Space to start the video playing. Listen to it. Tap Space again after a short while. Switch over to whatever writing app I was using. Write what I thought I heard. Switch back to YouTube (or whatever). Tap Space again. Rinse and repeat, yes, but I would also have to go back a few seconds if I misheard or mistyped something. Which, again, YouTube in particular is unhelpful with.

YouTube added AI-based transcripts to most videos. And that helps a lot, though I still need to listen to the video at the point I’m quoting because those transcripts are sometimes a bit off. But it’s still a nice time saver. (I used this most recently in my weekend story about the new Commodore which, for now, doesn’t have a blog or press site.) My wife uses audio transcription capabilities in Word and other tools to create transcripts of the audio recordings she makes of interviews. And in both cases–the videos I’m using or her interview transcripts–AI is very useful for generating summaries. She does this more than I do, but I’m getting there.

Here’s another one, with a recent and more specific example. The Snipping Tool in Windows 11 is one of a handful of in-box apps that has evolved dramatically in recent years thanks to AI, and in overtly positive ways. And I use one of the many tiny-ish new features in Snipping Tool more than I’d expected: You can use it to copy the text out of an image to the Clipboard and then paste that text into Notepad or whatever writing tool you use.

I used this capability over the weekend to turn an image version of a quote about HMD pulling Nokia phones out of the U.S. market into text.

I know. These are not dramatic AI adoptions by any measure, but they do measure up to the bar I established earlier this year: At a high level, AI is about saving time.

Welcome to the post-PC world

I’ve written so many words over the years about the iPad not living up to its initial promises, and about my growing frustration recently that Apple was holding it back artificially. There’s no reason to recount all that here, in part because anyone reading this is likely familiar with that drama, and in part because this nightmare is suddenly and unexpectedly over. At WWDC 2025 in June, Apple announced that it would bring Mac-like multitasking capabilities to the iPad–to its UI and back-end–that literally answered all my previous complaints. Testing these capabilities on my iPad Air, first with an external keyboard and mouse and then with Apple’s Magic Keyboard, confirmed this. We’re about to enter a brave new world.

But a computer, as I think of it, isn’t just a laptop form factor device with a large screen, a full-sized keyboard, and a touchpad, mouse, or other pointing device. We have desktop PCs, NUCs and other mini-PCs, and other form factors too. I do use some desktop-type PCs, but I long ago switched to what I call a More Mobile setup where I use a laptop with a Thunderbolt or USB dock/hub at a desk, where it can be tied to power, an external display, a full-sized desktop keyboard and mouse, a webcam, and other peripherals using a single USB-C cable. This is a best-of-both-worlds scenario of sorts. The laptop is a laptop on the go. But it’s also like a desktop or mini-PC when used with a dock or hub.

Now that the iPad is maturing to take on more laptop-like capabilities, it’s clear that many, many users will be able to replace an aging Windows laptop or MacBook with an iPad. Even I could probably make it work. That article about me using my iPad Air with the iPadOS 26 developer beta and a Magic Keyboard was also written on the iPad Air in that configuration as a nice proof point. I used iA Writer to write the text and Safari for iPad to post it to Thurrott.com. But what I didn’t do was access, edit, or post any of the images I needed for that article to the site. I used my PC for that. I figured I’d look at that eventually.

And I did. The other day, I was sitting here on the couch, reading whatever article in the morning, and two thoughts occurred.

The first was that I have, to date, used iPads almost exclusively in portrait mode, and one of the side-effects of the Magic Keyboard or any similar type cover is that it’s usually limited to a landscape orientation. I’ve been using the iPad exclusively with the Magic Keyboard since it arrived, but I brought its Smart Folio case here to Mexico just in case. The thing is, I’m getting used to it. With a few exceptions, most of the apps I use work identically with touchpad gestures, which makes navigating between and within apps seamless. And I’m surprised to say I’m getting used to landscape orientation for reading.

The second thought I had was that I own or at least have access to sophisticated image editing apps for the iPad. So there was no reason to wait. I downloaded Affinity Photo for iPad, which I own, and Adobe Photoshop for iPad, which I get as part of a lower-tier Adobe CC subscription. And in using each in turn, it was obvious that either would work well for the image editing needs I have for Thurrott.com images. Clearly, I could write articles on an iPad, edit the images for those articles on an iPad, and then post them to Thurrott.com … on an iPad.

So that’s interesting. And, who knows? Maybe that’s something that will happen in an emergency. Or maybe this proceeds to the point where using an iPad on the go for work and play makes sense. My iPad is a bit small at 11 inches, but even a 13-inch iPad Air or Pro is smaller than I’d like for writing and other productivity work. Will the advances in iPadOS 26 lead to bigger iPads? I suspect they might. And I have to say, a 15-inch or bigger iPad Air or Pro is quite compelling all of a sudden.

But that’s for the future.

It’s clear based on what I know that an iPad can be used in a More Mobile-ish setup today, and more elegantly when iPadOS 26 arrives. It has long supported Bluetooth keyboards and mice and other wireless peripherals. It has long supported USB-C for expansion purposes. And in recent years, it has supported a single external display. (If you’re a Mac user, you can also seamlessly use an iPad as a second display.) So what is that experience really like?

To find out, I connected my iPad Air to the Thunderbolt 4 Dock that I use here in Mexico. And then, later, to the Anker USB-C hub that’s so useful that I think we now have four of them between my wife and me. (Two each for PA and Mexico, plus I sometimes travel with one too.)

Each worked. And each worked identically in the sense that each of the peripherals I connected to either device worked–or did not work–in the same way. Those peripherals are:

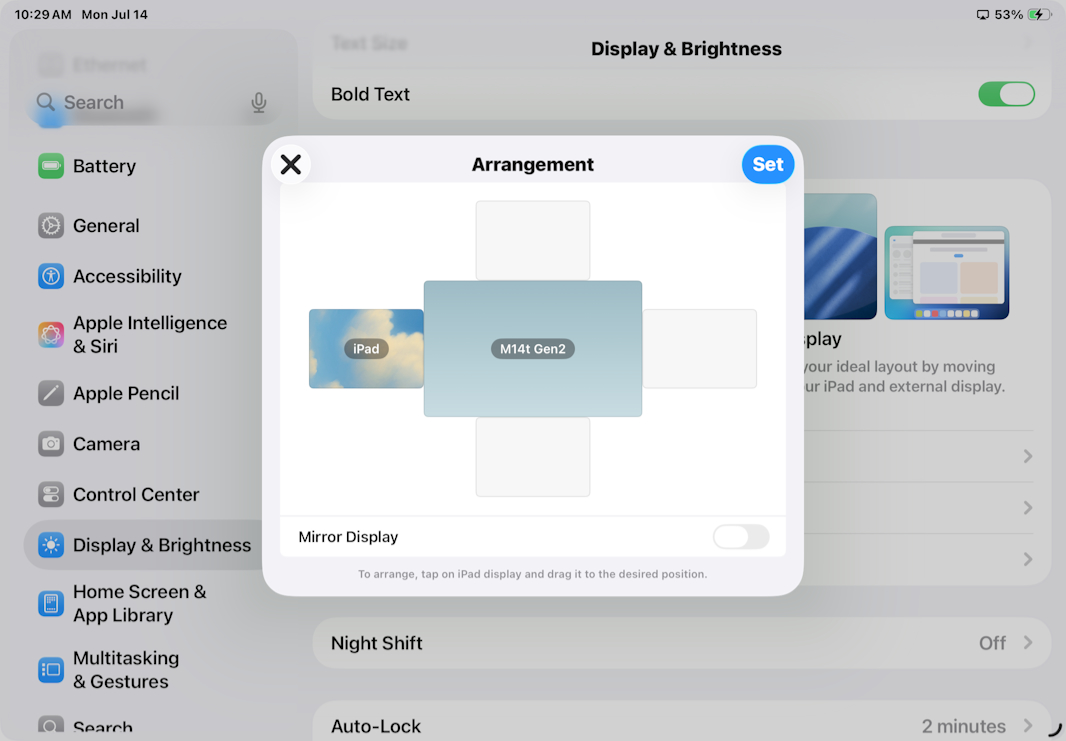

External display. I have been using a 24-inch Dell monitor in Mexico since last year, I think, but I was finally able to bring a 15-inch USB-C display here for this trip, so I’ve been using that. When I connected the iPad to the Dock/hub, the display lit up immediately and mirrored the display on the iPad. This isn’t ideal in the sense that the external display has a different resolution and aspect ratio than the iPad display, so there are black bars. But here’s the good news: In Settings > Display & Brightness > Displays, you can switch from “Screen Mirroring” to “Extended Display” and then configure their relative physical arrangement. And then it works exactly the way any multitasker would want. Nice!

Microsoft Sculpt Keyboard and Mouse. My Microsoft Sculpt Keyboard and Mouse connect to the Dock/hub (and thus to the computer) with a USB-A dongle. And these worked immediately as well. The keyboard has a Windows-centric layout, of course. But it works fine, as do mouse features like scrolling and right click. I can use the Windows Key + Space keyboard shortcut to bring up Spotlight, search for an app I want to run like Affinity, and then drag the app window from the internal display to the external display with the mouse if needed. It’s not perfect–Affinity, for example, won’t go truly full-screen on the external display and instead appears to be mimicking the aspect ratio of the iPad display–but it is impressive. And quite usable.

External webcam. I have a 4K Dell webcam attached to the Dock/hub over USB-A and this doesn’t appear to work with the iPad. I’ve only tried a few apps–Apple’s Camera app, of course, but also the Google Meet web app–and in each case, they can see only the iPad’s front- and rear-facing cameras. This isn’t a deal-breaker, given the quality of the iPad front-facing camera I would normally use. But it would require me to mount the iPad on a stand or whatever so it was better placed.

USB microphone. I use an ATR2500x-USB cardioid condenser microphone here in Mexico for podcasts and work calls, and it attaches to the Dock/hub via USB-A. From what I can tell so far, this, too, does not work with the iPad. There are no audio/microphone switching controls I can find, in various apps or in Settings. That is a bit of a showstopper, obviously, but I also believe it to be app-specific. And my understanding is that a microphone that attaches directly to the iPad’s headphone/microphone jack would work more broadly. I do have a set of RODE Wireless-GO microphones that I’ll test soon.

This continues to be a fascinating development.

Notepad as a Markdown editor

When Microsoft awkwardly wedged Copilot into Windows 11 a few years back, the community collectively whiplashed with fears of enshittification. This was warranted for all kinds of reasons, and Copilot remains an open question mark of sorts when it comes to its integration in Windows 11.

But let’s be fair. Some of Microsoft’s other efforts to bring AI to Windows 11 are much more successful. Clipchamp remains a wonder. The writing tools in Notepad are fantastic. The image editing capabilities in Paint and Photos are mostly terrific. And the evolution of Snipping Tool is surprising in that this tool went from superfluous to necessary almost overnight (with one reason cited previously in this article).

Touching classic tools like Notepad and Paint is fraught with drama. Things got off to a bad start with Paint, which was initially modernized with only Light mode support and a bunch of broken keyboard shortcuts, but Microsoft course-corrected in time, and Paint is excellent again today. Notepad has been more successful. For all the handwringing, each of the additions that Microsoft has made to Notepad in recent years has been well-done, and you can disable any of the AI features if you don’t like them for whatever reasons.

But the addition of Markdown compatibility, now in preview, has triggered some ridiculous protestations. Part of the problem is that the feature is misunderstood, thanks in part to Microsoft’s poor communications. Part of it is just old-fashioned, knee-jerk reactionism: Our aging community is change-averse and doesn’t like to see a classic tool like Notepad, which in their eyes should never evolve, getting new features. I get it, honestly. But as a daily user of Notepad, I’m here to tell you that this app is better than ever. And again, if you don’t like something, virtually every functional addition can be disabled.

All that said, the new Markdown feature is particularly compelling to me. I’ve changed the way I write in fits and starts over the years, but I first wrote about my use of Markdown almost 7 years ago and I see this plain text format as a key component of my DIY personal productivity tech stack. Markdown is both human-readable and machine-readable, and it will never be obsolete. More to the point, I use it every single day. To write books. To write articles like this one. To take notes. For anything that is writing-related.

How I do that has shifted a bit over the years, and I expect that to continue. I use Typora for general writing, as with this article, though I prefer iA Writer in some ways, and that app works better on the Mac. I use Markdown syntax when I take notes in Notion. I use Markdown in Visual Studio Code when I write books. Could I use Notepad for any of this? How incredible would it be to use Notepad, a free tool that’s been built into Windows since 1985, for writing?

This is appealing for all kinds of reasons. In Giving In: Microsoft Edge (Premium), I noted that using an in-box Windows 11 app is a huge time-saver for me since I review so many laptops and have to install, configure, and maintain all the third-party apps I need. Using Edge means I don’t need to install or deal with other browsers. Could I use Notepad and not install or deal with any of those other apps?

No, not yet. My workflow requirements are very specific, and one of the things that makes Typora perfect for me is that it has a way to output Markdown as perfectly clean HTML that is ideal for importing into WordPress, the back-end for this website. Other apps I would otherwise consider do not. And, as it turns out, neither does Notepad. So I would need to use a WordPress plug-in for Markdown-to-HTML conversion if I wanted to use Notepad.

If it weren’t for that, I could almost make it work. Notepad supports all the Markdown features that I need for articles, though it doesn’t support some of the special features I need for my books. (Leanpub uses a Markdown variant called Markua, and I need inter-document linking capabilities and other esoterica.) It works pretty well, too, aside from some spacing issues, and I quietly wrote a few articles for the site in Notepad last week for testing purposes. Export to WordPress remains a problem. But what the heck, it has built-in spell-checking. And your requirements will be different from mine.
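To make the missing Markdown-to-HTML step concrete, here is a minimal sketch in Python. It is not how Typora, Notepad, or any WordPress plug-in actually does the conversion. It handles only a small, assumed subset of Markdown (headings, paragraphs, bold, italics, and links), and the function names `inline` and `markdown_to_html` are made up for illustration. A real workflow would want a full Markdown parser.

```python
import html
import re


def inline(text: str) -> str:
    """Convert a small subset of inline Markdown to HTML."""
    # Escape &, <, and > first so raw text cannot inject markup.
    text = html.escape(text, quote=False)
    # Links: [label](url)
    text = re.sub(r"\[([^\]]+)\]\(([^)\s]+)\)", r'<a href="\2">\1</a>', text)
    # Bold is handled before italics so ** is not read as two single asterisks.
    text = re.sub(r"\*\*(.+?)\*\*", r"<strong>\1</strong>", text)
    text = re.sub(r"\*(.+?)\*", r"<em>\1</em>", text)
    return text


def markdown_to_html(source: str) -> str:
    """Turn blank-line-separated Markdown blocks into plain HTML."""
    out = []
    for block in re.split(r"\n\s*\n", source.strip()):
        if not block:
            continue
        # A single-line block that starts with 1-6 '#' characters is a heading.
        heading = re.fullmatch(r"(#{1,6})\s+(.*)", block)
        if heading:
            level = len(heading.group(1))
            out.append(f"<h{level}>{inline(heading.group(2).strip())}</h{level}>")
        else:
            # Join soft-wrapped lines into one paragraph.
            text = " ".join(line.strip() for line in block.splitlines())
            out.append(f"<p>{inline(text)}</p>")
    return "\n".join(out)


if __name__ == "__main__":
    sample = "## Notepad\n\nIt has **built-in** spell-checking."
    print(markdown_to_html(sample))
```

The output has no wrapper elements, classes, or inline styles, which is the kind of clean HTML that pastes into a WordPress editor without cleanup.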

Web browsers are entering the AI era

I’ve been somewhat obsessed with web browsers and why they’ve not been modernized in any major ways in recent years despite their importance. And I’ve written enough about this topic that I won’t rehash it all here. What I would like to do, however, is plant a seed of an idea in your brain that points, I think, to an interesting future.

Consider the conservative way in which Microsoft has added AI to Microsoft Edge so far. For the most part, the interface is a Copilot sidebar in which you can be viewing an article or video on the web and then interacting with it in some way in the sidebar. This is the literal embodiment of the “copilot” concept in which you do these things side-by-side. And it’s fine. It works pretty well, with the understanding that this type of interface makes the most sense when commingling legacy apps like a web browser, or Word, or whatever, with new AI capabilities.

A better approach is to more seamlessly integrate AI capabilities into an app. This will happen over time with some of the more popular legacy apps, starting with creator apps like video editors, where doing so is straightforward. But this will also happen with new apps. And in the web browser space, the biggest innovations we’ve seen so far have come from the most unlikely of sources, a tiny company called The Browser Company which perhaps went a little too radical with its initial offering, Arc browser.

The Browser Company ran into a complexity wall with Arc, however, and so it started over with Dia. This is a simpler, more traditional browser (for now) from a UX perspective, which will help with switchers. But it also has deeply integrated AI features that are truly compelling. The problem is that Dia is still in early preview, and it’s only on the Mac for now, limiting its appeal further.

I use Windows every day, not the Mac, but I’ve been trying to use Dia on my MacBook Air when possible to get a feel for its unique new features. The only thing that’s clear to me now is that I’d need to use this more to truly understand them. But I read an article in The New York Times recently that provided an interesting and eye-opening answer to a question I and others have had about Dia. What AI model(s) is it using? And why should anyone use this instead of ChatGPT or whatever else?

As it turns out, Dia is doing that thing I’ve been openly speculating about for over a year: It provides orchestration capabilities so that you, the user, don’t have to manually choose a model when getting work done. That is, when a Dia user asks the browser a question, Dia “analyzes it and pulls answers from whichever A.I. model is best suited for answering.” That is orchestration, and it’s the right approach. When you use Edge, you get Copilot. When you use Perplexity’s Comet, you get Perplexity. But what we really want, almost literally all of us, is the right answer, or the best answer. And, yeah, we could use different AIs ourselves and waste our time. But the very point of technology, the very point of AI, is to save us time, remember? And that’s what this is doing.
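As a rough illustration of what orchestration means in practice, here is a toy sketch in Python. It says nothing about how Dia actually works. The model names, keyword lists, and the `choose_model` and `ask` helpers are all hypothetical, and simple keyword matching stands in for whatever analysis a real orchestrator would perform.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str             # hypothetical model label, not a real product
    keywords: frozenset   # words that hint this model suits the question

    def answer(self, question: str) -> str:
        # Stand-in for a real API call to the chosen model.
        return f"[{self.name}] would answer: {question}"


# Hypothetical specialist models and a general-purpose fallback.
MODELS = [
    Model("code-model", frozenset({"code", "python", "bug", "function"})),
    Model("search-model", frozenset({"news", "today", "latest", "price"})),
]
DEFAULT = Model("general-model", frozenset())


def choose_model(question: str) -> Model:
    """Pick the model whose keywords overlap most with the question."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    best = max(MODELS, key=lambda m: len(m.keywords & words))
    # Fall back to the general model when nothing matches at all.
    return best if best.keywords & words else DEFAULT


def ask(question: str) -> str:
    """The user asks once; the routing decision happens behind the scenes."""
    return choose_model(question).answer(question)


if __name__ == "__main__":
    print(ask("Why does this Python function have a bug?"))
    print(ask("Write a haiku about summer"))
```

The user calls `ask` and never names a model, which is the time-saving part of the idea.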

Dia isn’t ready for mainstream use yet, especially if you’re a Windows user. And I am not saying that Dia is the answer per se. What I am saying is that it’s an example of the answer. That is, while we could keep strapping ourselves to whatever Big Tech ecosystem and just hope and assume that that company will do right by us, that’s stupid. We need to look out for ourselves, and when it comes to AI, there is no one AI that will be best for everything. Instead, we need orchestration working on our behalf to intelligently choose the right AI for the job in the moment. And that orchestration is not going to come from Google Chrome or Microsoft Edge. It’s probably not going to come from Apple Safari either, unless Apple keeps flailing with AI and is forced down that path. Which won’t benefit us on Windows regardless.

Put more simply, the best web browsers of the future are almost certainly going to come from third parties, and not platform makers like Apple, Google, and Microsoft. That’s arguably true today. But it will be even more true as browsers transform into AI front-ends. Which is very much what is happening right now.

So, yeah. This one is a lot more future-leaning. But it is happening. And I think we all need to pay attention to this to see where it goes.
